AI Wins At Rubik's Cube With Just One Hand

Written by Mike James

Saturday, 19 October 2019

A better headline would have been "with one hand tied behind its back", but this robot doesn't have another hand and has no back to speak of. It does, however, have two neural networks and can solve the cube using just one hand. What is the importance of this work? We keep seeing examples of neural networks solving difficult problems, but mostly in simulations or game playing. It must have occurred to you that a really interesting application would be to put a neural network into a robot and see how well it walks or runs or whatever. This is what has now been tried at OpenAI. In this case the real-world component is a human-like robotic hand. Just the one.

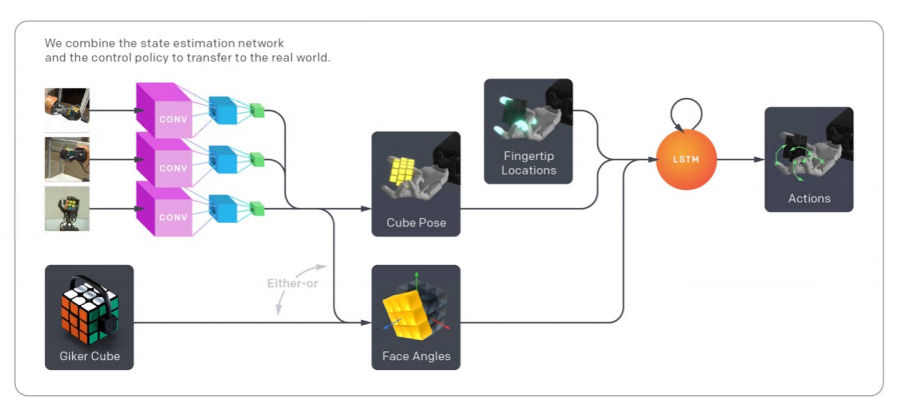

The architecture of the system includes three vision networks to determine the position of the cube and a recurrent network to control the hand:

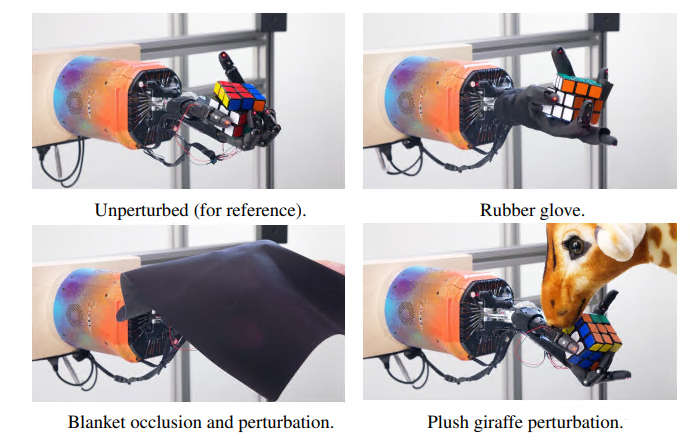
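To make the data flow concrete, here is a minimal sketch of a vision stage feeding a recurrent controller. Everything in it is an assumption made for illustration: the names `estimate_cube_state`, `RecurrentPolicy` and `control_loop`, the single-number "cube state" and the fixed weights are all hypothetical and are not taken from OpenAI's system. The point is only that a recurrent controller carries a hidden state from step to step, so its output depends on what it has seen so far.

```python
import math
from dataclasses import dataclass


def estimate_cube_state(camera_frames):
    """Stub for the vision stage: reduce one reading per camera to a single estimate."""
    return sum(camera_frames) / len(camera_frames)


@dataclass
class RecurrentPolicy:
    """Toy stand-in for a recurrent controller with one hidden value and fixed weights."""
    hidden: float = 0.0

    def act(self, observation: float) -> float:
        # Blend the previous hidden state with the new observation, so the
        # action depends on history and not just on the current input.
        self.hidden = math.tanh(0.5 * self.hidden + 0.5 * observation)
        return self.hidden


def control_loop(frames_per_step, policy):
    """Run vision then control for each time step and collect the actions."""
    actions = []
    for frames in frames_per_step:
        cube_state = estimate_cube_state(frames)
        actions.append(policy.act(cube_state))
    return actions


if __name__ == "__main__":
    # Two identical observations still produce different actions,
    # because the hidden state changed after the first step.
    print(control_loop([[1.0, 1.0, 1.0], [1.0, 1.0, 1.0]], RecurrentPolicy()))
```

In a real system both stages would be trained networks; the stubs here only show where the hidden state sits in the loop.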

Of course, training a network on a real hand would take far too long for the tens of thousands of repetitions it needs, so the neural network controlling the hand was trained using reinforcement learning in a simulated environment. The problem with simulated environments is that they generally lack the variation encountered in the real world. The solution proposed by the team at OpenAI is Automatic Domain Randomization (ADR). Instead of just varying the problem slightly, the parameters of the simulation itself are changed, i.e. not just the scrambling of the Rubik's cube, but the dynamics as well. At first the environment is fixed and the robot learns to manipulate the cube. After this initial training the randomization starts: the size of the cube varies slightly, the dynamics of the hand are changed and so on. This makes the system learn a robust, and hopefully generalizable, solution.

To see if the learning is applicable to the real world, the trained network was transferred to real hardware, which was then tested in a range of non-ideal situations. The robot hand equivalent of kicking the robot dog includes putting a rubber glove on, tying two fingers together, poking the cube with a stuffed giraffe and so on. I didn't make the giraffe part up.
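The idea of starting from a fixed environment and then widening the randomization can be sketched in a few lines of Python. This is a simplified illustration, not OpenAI's algorithm: the parameter names, the numeric values, the thresholds `expand_at` and `shrink_at`, and the rule of widening every range at once are all assumptions chosen to keep the example short, and the procedure in the paper differs in detail.

```python
import random
from dataclasses import dataclass


@dataclass
class ParamRange:
    """Randomization range for one simulator parameter (hypothetical)."""
    nominal: float
    low: float
    high: float
    step: float

    def sample(self, rng):
        return rng.uniform(self.low, self.high)

    def widen(self):
        self.low -= self.step
        self.high += self.step

    def narrow(self):
        # Never shrink past the nominal (un-randomized) value.
        self.low = min(self.nominal, self.low + self.step)
        self.high = max(self.nominal, self.high - self.step)


def make_ranges():
    # Every range starts collapsed to a single point: a fixed environment.
    # The values are made up for illustration.
    return {
        "cube_size_cm": ParamRange(5.7, 5.7, 5.7, step=0.05),
        "finger_friction": ParamRange(1.0, 1.0, 1.0, step=0.02),
    }


def adr_update(ranges, success_rate, expand_at=0.8, shrink_at=0.4):
    """Widen the ranges when the policy does well, narrow them when it struggles."""
    for r in ranges.values():
        if success_rate >= expand_at:
            r.widen()
        elif success_rate <= shrink_at:
            r.narrow()


def sample_environment(ranges, rng):
    """Draw one randomized simulator configuration."""
    return {name: r.sample(rng) for name, r in ranges.items()}


if __name__ == "__main__":
    rng = random.Random(0)
    ranges = make_ranges()
    # Pretend evaluation results; a real loop would measure these by
    # running the current policy in the simulator.
    for success_rate in (0.9, 0.9, 0.3, 0.9):
        adr_update(ranges, success_rate)
        print(sample_environment(ranges, rng))
```

The design choice worth noting is that difficulty is tied to measured performance: the environment only becomes more varied once the policy copes with the current level of variation.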

There is a slick video explaining the ideas, but I think this uncut video showing the hand in action is more impressive. Remember as you watch it that none of this behavior was programmed:

Now watch the slick presentation video; it too is interesting:

It's not perfect: the robot solves the cube only 60% of the time, and only 20% of the time for a maximally difficult scramble, so we don't have to worry that it is going to beat humans just yet. What is more important is that neural networks and real robots are working together, and reinforcement learning with simulations seems to be the way to make it happen. As the research paper concludes:

"In this work, we introduce automatic domain randomization (ADR), a powerful algorithm for sim2real transfer. We show that ADR leads to improvements over previously established baselines that use manual domain randomization for both vision and control. We further demonstrate that ADR, when combined with our custom robot platform, allows us to successfully solve a manipulation problem of unprecedented complexity: solve a Rubik’s cube using a real humanoid robot, the Shadow Dexterous Hand. By systematically studying the behavior of our learned policies, we find clear signs of emergent meta-learning. Policies trained with ADR are able to adapt at deployment time to the physical reality, which it has never seen during training, via updates to their recurrent state."

More Information

Solving Rubik's Cube with a Robot Hand https://openai.com/blog/solving-rubiks-cube/

Related Articles

AI Learns To Solve Rubik's Cube - Fast!

A Robot Learns To Do Things Using A Deep Neural Network

Rubik's Cube Is Hard - NP Hard

Rubik's cube - the order of God's Number

17x17x17 Rubik Cube Solved In 7.5 Hours


Last Updated (Wednesday, 21 July 2021)