Tactical Pentesting With Burp Suite

Written by Nikos Vaggalis

Monday, 30 November 2015

Penetration testing can be thought of as a form of necessary internal hacking if we are going to keep the websites we develop secure from outside attacks. Nikos Vaggalis enrolled in a webinar from Secure Ideas to discover the capabilities of Burp.

You're certainly aware of the horror stories of passwords, credit card details or identities being stolen, or of all your online activity being monitored. This can happen to anyone, at any time, through the exploitation of web-based vulnerabilities, which further down the road can act as a conduit for breaking into systems. Once access is achieved, anything and everything is at risk.

The Need For Pentesting

From the moment a site is released to the public web, the clock is ticking until the first hacking attempt against it. We should therefore be prepared, having already identified and closed any security issues open to exploitation. This can only happen through penetration testing. Pentesting, once the preserve of a select few, should in this era of IoT no longer be a restricted practice but a common skill for anyone involved in building a web-based product.

So what better way to kickstart our education than hearing from the professionals in this field? Even better, watching them work their magic with the Swiss army knife of pentesting, Burp Suite? Enter Secure Ideas, a security/pentesting firm that offers a two-hour webinar giving a quick introduction to the look and feel of the tool along with a high-level overview of the workflow. It looked likely to meet our requirements, making it an easy decision to pay the $25 attendance fee. Based on the observations gathered from the webinar, here's a synopsis of both the webinar and Burp, including some of the useful hints that were presented.

The Why and How of Using Burp

Most people know Burp as a proxy that captures web traffic, and that is true, but only partially. In reality, it helps to think of Burp as a suite, a collection of tools that must be combined to achieve a successful penetration. The webinar started with a quick talk on the consequences of having vulnerabilities exploited, and outlined the reasons for preferring manual probing over automated, script-driven probing. Hint: with automated scripts it is easy to misinterpret the results of the probe. The presenters then established the value of Burp compared with the many alternative frameworks, arguing that Burp wins hands down thanks to its completeness and the intuitiveness of its UI. Next, they outlined the ways of launching the Java-based executable and how much RAM your machine should have for a smooth experience.

The User Interface

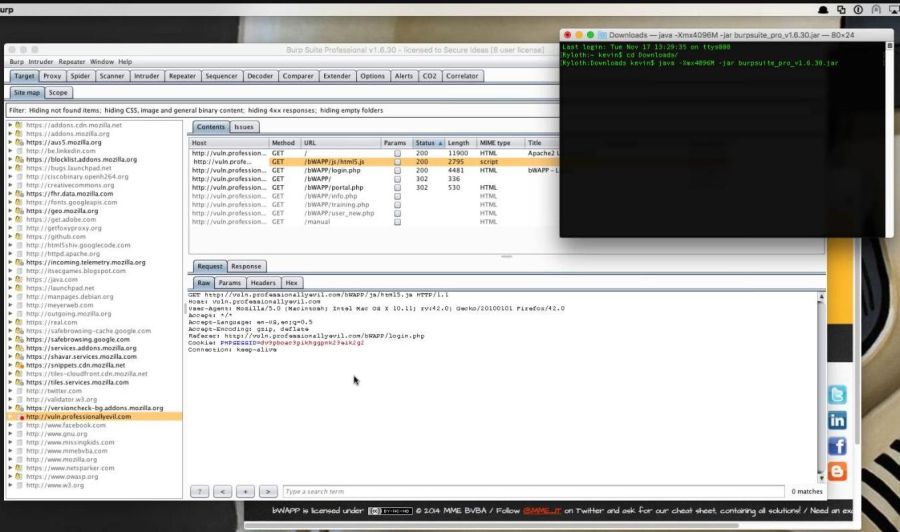

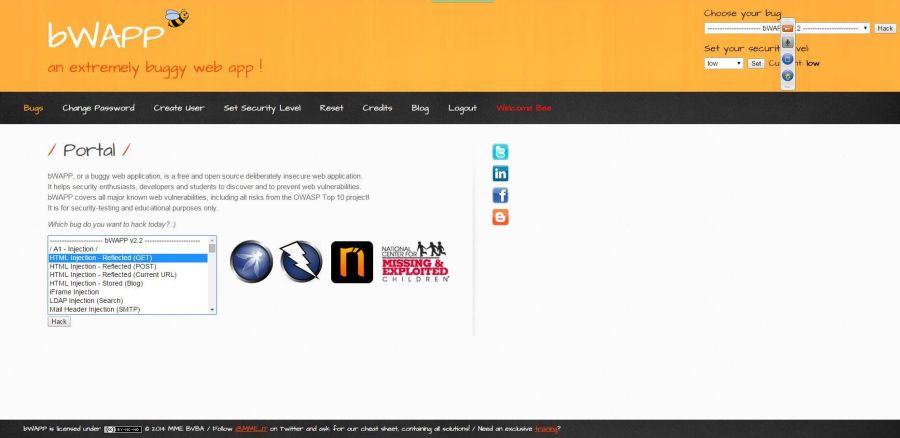

Then we started going through the UI, at which point the instructors, Kevin Johnson and Jason Gillam, were quick to draw the audience's attention to a variety of UI tabs and check-boxes, settings that can greatly affect the Suite's performance and functionality, so much so that it raises the question of whether some of them should be made the defaults.

The Vulnerable Website

To demonstrate the tool's capabilities during the live session, a specially crafted app called bWAPP was brought into play. bWAPP is an open source project that provides an "extremely buggy web app", an educational resource that is deliberately vulnerable and waiting to be hacked.
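For Burp to see bWAPP's traffic at all, the client has to send its requests through Burp's proxy listener, which by default listens on 127.0.0.1:8080. The sketch below does this from Python's standard library instead of a browser. It assumes the default listener address, and the target URL in the comment is a hypothetical placeholder for wherever bWAPP happens to be installed. HTTPS targets would additionally require the client to trust Burp's CA certificate.

```python
import urllib.request

# Burp's proxy listener defaults to 127.0.0.1:8080; change this if your
# listener is configured differently.
BURP_PROXY = "http://127.0.0.1:8080"


def burp_opener(proxy_url: str = BURP_PROXY) -> urllib.request.OpenerDirector:
    """Build an opener that routes both HTTP and HTTPS requests via Burp."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


opener = burp_opener()
# With Burp running, a request like the one below (hypothetical host and
# path) would show up in Burp's Proxy > HTTP history tab:
# opener.open("http://bwapp.example/xss_get.php?firstname=test")
```

Passing our own ProxyHandler to build_opener replaces the default one, so every request made through this opener is forced through the proxy regardless of the system proxy settings.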

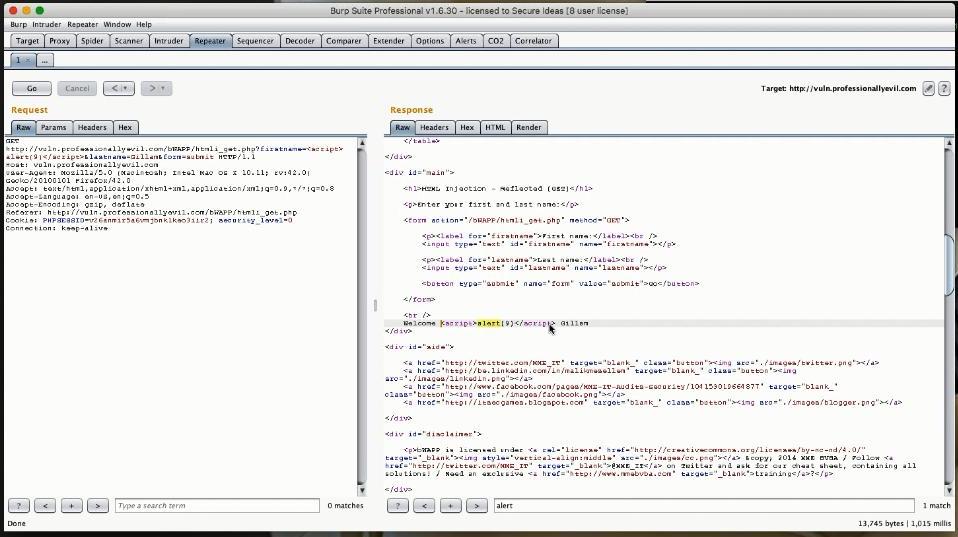

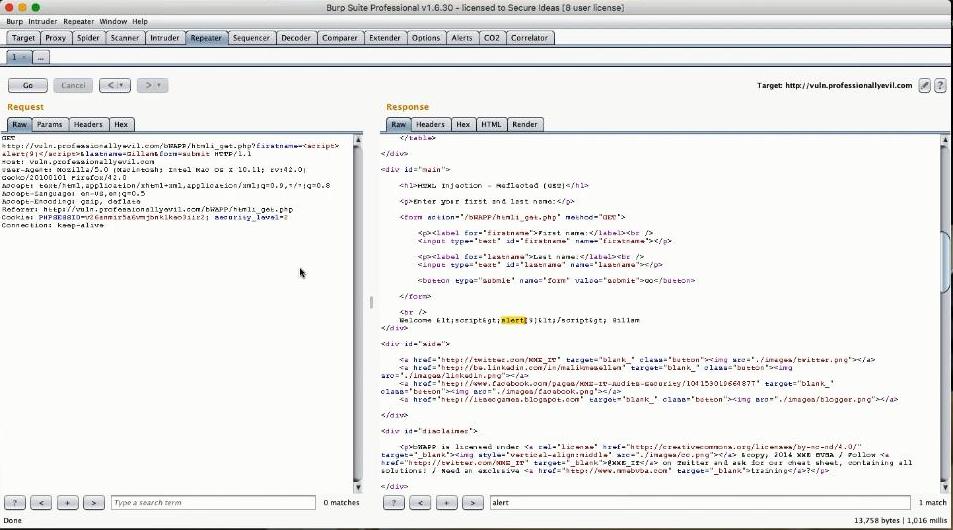

The instructors chose to attack it through its Reflected XSS (GET) vulnerability (which method you choose does not matter at this point), so that by observing the traffic exchanged between the site and our proxy, we could identify potential exploitation vectors. Once the vulnerable parameters were identified and payloads placed at the designated positions, the request, now carrying the altered input data, was replayed. This was all done with the very first side tool introduced, the Repeater, so named for obvious reasons.

The Repeater

So a captured request of the type:

The payload varies according to the attack vector. If, for example, we wanted to test for SQL injection instead, we would construct a payload such as `firstname=' or '1'='1'#`.
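What the Repeater does with a captured request can be sketched in a few lines: take the query string, swap one parameter's value for a payload, and URL-encode the result so that characters such as `#` are not mistaken for a URL fragment. The captured query string below is hypothetical; only the `firstname` parameter and the two payloads come from the webinar. The `naive_sql` helper is likewise a made-up, deliberately vulnerable query, included only to show why the SQL payload works against a MySQL back end, where `#` starts a comment.

```python
from urllib.parse import parse_qsl, urlencode


def inject_payload(query: str, param: str, payload: str) -> str:
    """Return the query string with `param`'s value replaced by `payload`.

    urlencode percent-encodes the payload, so '<', '#', quotes etc. reach
    the server intact instead of breaking the request.
    """
    pairs = parse_qsl(query, keep_blank_values=True)
    if param not in (name for name, _ in pairs):
        raise KeyError(f"parameter {param!r} not found in {query!r}")
    return urlencode([(name, payload if name == param else value)
                      for name, value in pairs])


def naive_sql(firstname: str) -> str:
    """Hypothetical vulnerable query: input pasted straight into SQL text."""
    return "SELECT * FROM users WHERE firstname = '" + firstname + "'"


# Hypothetical captured query string.
captured = "firstname=Jason&lastname=Gillam"

xss_query = inject_payload(captured, "firstname", "<script>alert(9)</script>")
sqli_query = inject_payload(captured, "firstname", "' or '1'='1'#")

print(xss_query)
print(sqli_query)
print(naive_sql("' or '1'='1'#"))
```

The last line prints `SELECT * FROM users WHERE firstname = '' or '1'='1'#'`: the payload's leading quote closes the string literal early, `or '1'='1'` makes the WHERE clause true for every row, and MySQL treats everything after `#` as a comment, so the dangling quote is ignored.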

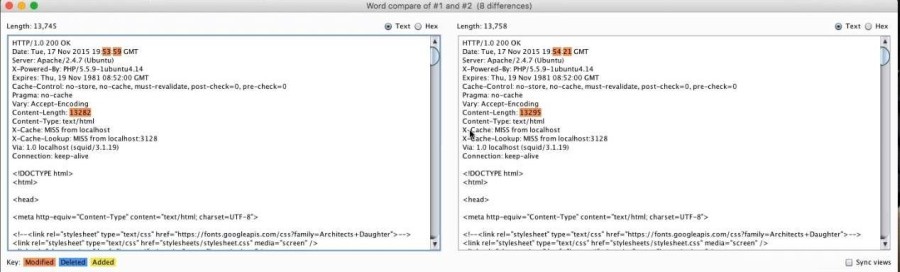

As a side note, the atmosphere created by the two instructors was relaxed, funny and easy-going. The chat window was enabled for all participants to enter queries, which were immediately answered either by another member of the audience or by the instructors themselves. In terms of technical delivery, there were a few hiccups with broken sound at the beginning of the session, but things soon normalized and the resulting quality was good and lag-free.

The Comparer

We were then introduced to the Comparer, a diff tool. The workflow involved capturing a request's response, altering and re-sending the request, and capturing the response again. Both responses were then sent to the Comparer, which compared them and highlighted the differences found. In the example, the instructors altered a cookie property called 'security_level', which sets how strict the site's security precautions are, with 2 being the strictest. The original request had a level of 0 and the next one was set to 2, producing a response that differed slightly from the original. The difference was immediately obvious: the Comparer highlighted, side by side, the Content-Length headers of both responses (for level 0 and level 2), showing that their sizes differed (13282 vs 13295).
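The core of what the Comparer does, showing only what changed between two responses, maps onto Python's `difflib`. In this sketch the two responses are hypothetical and truncated to a few header lines; only the Content-Length values 13282 and 13295 come from the webinar example. `ndiff` compares line by line, so it is a rough stand-in rather than a replica of Burp's own view.

```python
import difflib


def diff_responses(original: str, altered: str) -> list[str]:
    """Return only the lines that differ between two raw HTTP responses."""
    diff = difflib.ndiff(original.splitlines(), altered.splitlines())
    # ndiff prefixes removed lines with "- " and added lines with "+ ";
    # "  " marks unchanged lines and "? " marks intra-line hint lines.
    return [line for line in diff if line.startswith(("- ", "+ "))]


# Hypothetical, heavily truncated responses for security_level 0 and 2.
level_0 = "HTTP/1.1 200 OK\nContent-Type: text/html\nContent-Length: 13282"
level_2 = "HTTP/1.1 200 OK\nContent-Type: text/html\nContent-Length: 13295"

for line in diff_responses(level_0, level_2):
    print(line)
```

The unchanged status line and Content-Type header are filtered out, leaving just the two Content-Length lines, which is the same "what moved?" question the Comparer answers visually.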

Scrolling through the responses, we found what actually triggered this variation. The original response, at security level 0, echoed the injected script back unescaped, while the altered one was: `Welcome &lt;script&gt;alert(9)&lt;/script&gt;Gillam`. Because of the change in security level, the site anticipated an HTML injection/XSS attempt and therefore escaped the special HTML characters.
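The effect of that escaping can be reproduced with Python's `html.escape`, used here purely as a stand-in for whatever routine bWAPP applies at security level 2 (an assumption; bWAPP's own code was not shown in the webinar).

```python
import html

payload = "<script>alert(9)</script>"

# Escape &, <, > (and quotes) so the browser renders the payload as text
# instead of executing it as a script element.
escaped = html.escape(payload)

print(escaped)                      # &lt;script&gt;alert(9)&lt;/script&gt;
print(len(escaped) - len(payload))  # 12
```

Each of the four angle brackets grows by three bytes, 12 in total, which is close to the 13-byte gap between the two Content-Length values.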

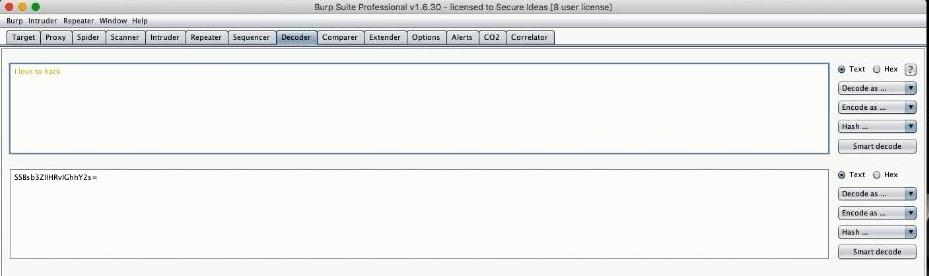

The Decoder

We were then introduced to the Decoder, a utility that encodes and decodes data in a variety of formats, such as base64 and URL. What's interesting is that it can encode or decode in multiple layers! For example, encoding the string "I love to hack" into base64 results in SSBsb3ZlIHRvIGhhY2s=, which can then be further encoded in, say, URL percent-encoding, resulting in SSBsb3ZlIHRvIGhhY2s%3D, thus passing through two levels of encoding. (If you actually URL-encode the string SSBsb3ZlIHRvIGhhY2s= within the Decoder, you get a different and much longer result, since the Decoder percent-encodes all characters, safe and unsafe alike as defined by RFC 3986, and not just the unsafe ones.)

The example involved receiving a cookie encoded in base64, which Jason then decoded into its actual text representation.
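Both directions, the two-layer encoding of "I love to hack" and the layer-by-layer decoding applied to the cookie, can be reproduced with Python's standard library. `quote(..., safe="")` percent-encodes only characters outside RFC 3986's unreserved set, giving the short form; the `url_all` line imitates the Decoder's encode-everything behaviour by percent-encoding every byte. Uppercase hex digits are used here, and Burp's exact output format may differ.

```python
import base64
from urllib.parse import quote, unquote

plain = "I love to hack"

# Layer 1: base64
b64 = base64.b64encode(plain.encode("utf-8")).decode("ascii")

# Layer 2a: percent-encode only characters outside the RFC 3986 unreserved set
url_min = quote(b64, safe="")

# Layer 2b: percent-encode every byte, safe or not, as the Decoder does
url_all = "".join(f"%{byte:02X}" for byte in b64.encode("ascii"))

# Decoding peels the layers off in reverse order: URL first, then base64
decoded = base64.b64decode(unquote(url_all)).decode("utf-8")

print(b64)           # SSBsb3ZlIHRvIGhhY2s=
print(url_min)       # SSBsb3ZlIHRvIGhhY2s%3D
print(len(url_all))  # 60
print(decoded)       # I love to hack
```

The encode-everything form is three times the length of the base64 string, since each of its 20 characters becomes a three-character `%XX` escape, which is why the Decoder's result looks so much longer.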


Last Updated (Monday, 30 November 2015)