| Finite State Machines |

| Written by Mike James | ||||

| Thursday, 18 January 2018 | ||||

Page 2 of 3

Grammar and machinesNow you can start to see why we have moved on from grammar to consider finite state machines. The structure of the machine corresponds to sequences that obey a given grammar. The question is which grammars correspond to finite state machines? More to the point, can you build a finite state machine that will accept any family of sequences? In other words, is a finite state machine sufficiently powerful to generate or accept sequences generated by any grammar? The answer is fairly easy to discover by experimenting with a few sequences. It doesn’t take long to realise that a finite state machine cannot recognise a palindromic sequence. That is if you want to recognise sequences like AAABAAA where the number of As on either side of the B has to be the same – then you can’t use a finite state machine to do the job.

If you want to think about this example for a while you can learn a lot. For example, it indicates that a finite state machine cannot count. You may also be puzzled by the earlier statement that you can build a finite state machine that can "remember" any past history. Doesn't this statement contradict the fact it cannot recognize a palindromic sequence? Not really. You can build a finite state machine that accepts AAABAAA and palindromes up to this size but unless you build the machine to do it won't recognize AAAABAAAA. Any finite state machine that you build will have a limit on the number of repeats it can recognize and so you can always find a palindromic sequence that it can't recognize. The point here isn't that you can' build a finite state machine that can recognize palindromes but that you can't do it so that it recognizes all palindromes. A finite state machine can count after all but only up to fixed maximum. There are lots of other examples of sequences that cannot be recognised by a finite state machine but can be parsed or specified by a suitable grammar. What this means is that finite state machine corresponds to a restricted type of grammar. The type of sequence that can be recognised by a finite state machine is called a ‘regular sequence’ - and yes this is connected to the regular expressions available in so many programming languages. A grammar that can parse or generate a regular sequence is called a regular or ‘type 3 grammar’ and its rules have the form: <non-terminal1> -> or <non-terminal1> -> symbol Other grammarsNow that we know that regular grammars, finite state machines and the sequences, or languages, that they work with do not include everything, the next question is what else is there? The answer is that there is a hierarchy of machines and grammars, each one slightly more powerful than the last. This hierarchy was first identified by Noam Chomsky who was interested in the grammar of natural language. He wanted to find a way to describe, analyse and generate language and this work is still under development today. So what is the machine one step more powerful than a finite state machine? The answer is a ‘push down machine’. This is a finite state machine with the addition of a push down stack or Last In First Out (LIFO) stack.

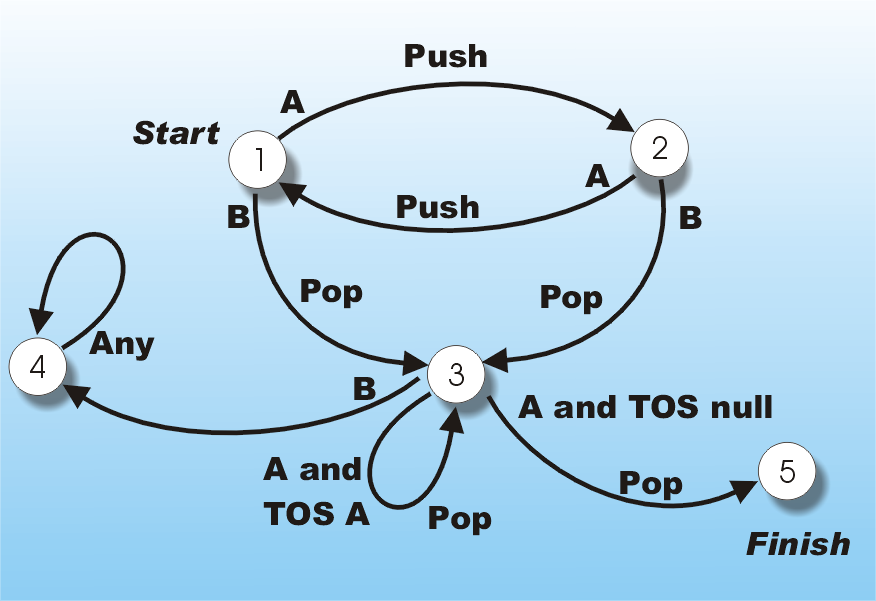

On each state transition the machine can pop a symbol off the stack or push the input symbol onto it. The transition that the machine makes is also determined by the current input symbol and the symbol on the top of the stack. At first sight the push down machine doesn’t seem to be any more powerful than a finite state machine – but it is. The reason is it more powerful is that while its stack only has finite memory it has a finite but unbounded memory, which can grow to deal with anything you can throw at it. For example, a push down stack machine can accept palindromes of the type AAABAAA where the number of As on each side of the B have to be the same. It simply counts the As on both sides by pushing them on the stack and then popping them off again after the B. Have a look a the push down machine below – it recognises palindromes of the type given above. If you input a string like AAABAAA to it then it will end up in the finish state and as long as you have used up the sequence, i.e. there are no more input symbols, then it is a palindrome. If you have symbols left over or if you end up in state 4 it isn’t a palindrome.

A palindrome detector (TOS =Top Of Stack)

So a push down machine is more powerful than a finite state machine. It also corresponds to a more powerful grammar – a ‘context free grammar’. A grammar is called context free or ‘type 2’ if all its rules are of the form: <non-terminal> -> almost anything The key feature of a context free grammar is that the left-hand side of the rule is just a non-terminal class. For example, the rule: A<S1>-> A<S1>A isn’t context free because there is a terminal symbol on the left. However, the rule: <S1>->A<S1>A is context free and you can see that this is exactly what you need to parse or generate a palindrome like ABA with the addition of: <S1>->B which is also context free. <ASIN:1405111879> <ASIN:0195374045> |

||||

| Last Updated ( Tuesday, 24 August 2021 ) |