AI Builds Lego From The Manual

Written by Lucy Black

Sunday, 07 August 2022

AI seems to be taking over all the pleasures. You struggle for hours to build that Lego model, but now AI can do the job for you in no time at all and so robs you of all your fun...

Building Lego models from the standard step-by-step instructions is something that a lot of people find enjoyable, and you can argue that it is a precursor to programming - and to building flatpack furniture, but that's another torment. In case you have never followed a Lego manual before - really? - it is worth saying that they are pictorial. You get a set of pictures that show you the sequence of blocks that have to be added to make the model. This is a very simple graphical programming language where you are the computer. In practice it works well, as long as you can find the block you need, and it is difficult to get it wrong except in extreme cases where there is some ambiguity in the diagram.
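To make the "manual as a program" idea concrete, here is a minimal Python sketch. The `Step` record and the `execute` function are hypothetical names invented for this illustration, and a real manual encodes far more (brick geometry, stud connections, sub-assemblies). Each step is treated as one instruction and the builder plays the part of the interpreter:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One manual step: which brick to add, where, and how it is turned."""
    brick: str                 # illustrative label, e.g. "2x4 red"
    position: tuple            # (x, y, z) grid cell of the brick's anchor
    quarter_turns: int = 0     # rotation about the vertical axis, in 90-degree units


def execute(manual):
    """Run the steps in order and return the finished model as a dict."""
    model = {}
    for number, step in enumerate(manual, start=1):
        if step.position in model:
            # A badly drawn manual could ask for two bricks in the same place.
            raise ValueError(f"step {number}: {step.position} is already occupied")
        model[step.position] = (step.brick, step.quarter_turns)
    return model


manual = [
    Step("2x4 red", (0, 0, 0)),
    Step("2x2 blue", (0, 0, 1), quarter_turns=1),
]
model = execute(manual)
```

The hard part, of course, is not running such a plan but producing it from the pictures, which is the job the AI has to do.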

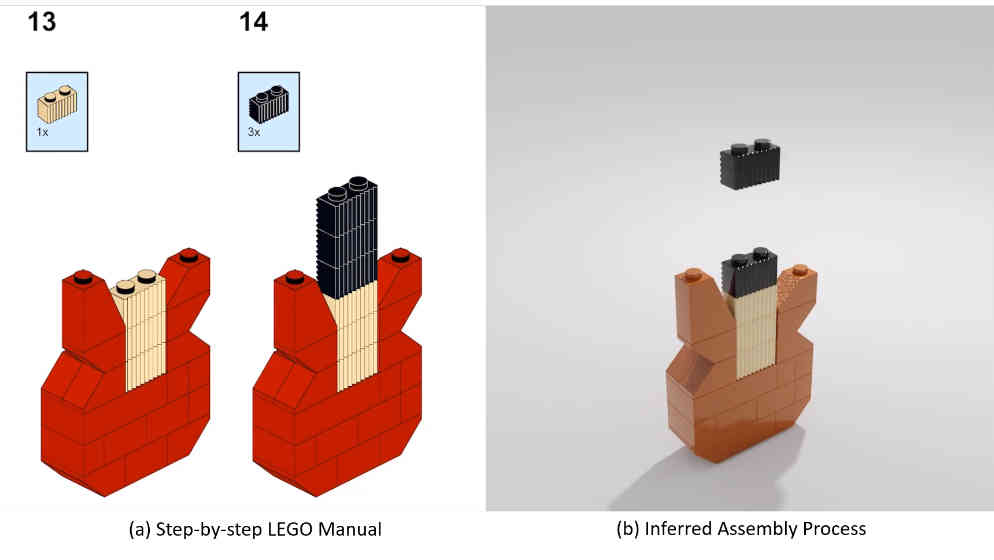

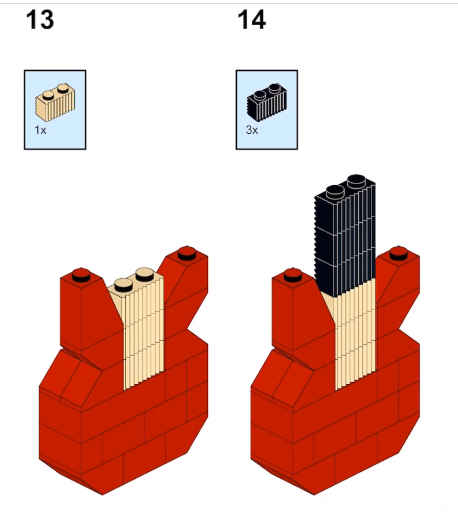

This said, while it might be mostly simple, don't underestimate the amount of intelligence that goes into the task. The image processing alone is something we would have had a lot of trouble with until recently. A team from Stanford, MIT and Autodesk AI has been working hard on the problem:

"We identify two key challenges of interpreting visual manuals. First, it requires identifying the correspondence between a 2D manual image and the 3D geometric shapes of the building components. Since each manual image is the 2D projections of the desired 3D shape, understanding manuals requires machines to reason about the 3D orientations and alignments of components, possibly with the presence of occlusions."

Of course, the solution involves neural networks and search. The Manual-to-Executable-Plan Network (MEPNet) is a hybrid approach that combines the best of both worlds, and it has two stages. In the first stage, a convolutional neural network takes as input the current 3D LEGO shape, the 3D model of the new components, and the 2D manual image of the target shape. It predicts a set of 2D keypoints and masks for each new component. In the second stage, the 2D keypoints predicted in the first stage are back-projected to 3D by finding possible connections between the base shape and the new components. The second stage also refines the component orientation predictions by a local search.

Systems like this one are the next stage in building complex AI systems. It is no longer sufficient to use a single neural network and employ end-to-end training for a task. It is difficult to see how end-to-end training alone could work for this task, for example. Neural networks, possibly more than one, will be components in a larger system that employs a range of techniques.
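The second stage is easier to picture with a toy example. The Python sketch below is not the MEPNet code: the fixed oblique camera in `project`, the function names and the sample data are all assumptions made for illustration. It shows the general idea of resolving a 2D keypoint's missing depth by only considering places where the new brick could actually connect to the base, and of settling its orientation by trying the four quarter turns and keeping the one with the smallest reprojection error:

```python
import math


def project(point):
    """Project a 3D point to 2D with an assumed, fixed oblique camera."""
    x, y, z = point
    return (x - y, 0.5 * (x + y) - z)


def rotate_z(offset, quarter_turns):
    """Rotate an (dx, dy, dz) offset about the vertical axis in 90-degree steps."""
    dx, dy, dz = offset
    for _ in range(quarter_turns % 4):
        dx, dy = -dy, dx
    return (dx, dy, dz)


def reprojection_error(position, quarter_turns, local_keypoints, predicted_2d):
    """Sum of 2D distances between predicted keypoints and the projected pose."""
    error = 0.0
    for local, target in zip(local_keypoints, predicted_2d):
        rx, ry, rz = rotate_z(local, quarter_turns)
        u, v = project((position[0] + rx, position[1] + ry, position[2] + rz))
        error += math.hypot(u - target[0], v - target[1])
    return error


def place_component(candidate_positions, local_keypoints, predicted_2d):
    """Search connection points and quarter turns for the best-fitting pose."""
    poses = ((pos, rot) for pos in candidate_positions for rot in range(4))
    return min(
        poses,
        key=lambda pose: reprojection_error(
            pose[0], pose[1], local_keypoints, predicted_2d
        ),
    )


# Hypothetical data: four free studs on top of the base shape, a 1x2 brick
# described by two keypoints in its own frame, and noisy 2D keypoints such as
# a first-stage network might output.
studs = [(0, 0, 1), (1, 0, 1), (0, 1, 1), (1, 1, 1)]
brick_keypoints = [(0, 0, 0), (1, 0, 0)]
predicted = [(1.05, -0.45), (-0.05, 0.05)]

position, quarter_turns = place_component(studs, brick_keypoints, predicted)
```

Restricting the search to valid connection points is what makes the problem tractable here: a single 2D point is consistent with a whole line of 3D positions, but only a handful of those lie on a stud where the brick can attach.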

The system seems to work well and I wouldn't be surprised if the next step is an AI that interprets flatpack instructions - "no, not like that, dumb human, connector T4 is used in socket S23 and the leg goes the other way up".

If you really want help with your Lego then you can download the code from GitHub.

More Information

Translating a Visual LEGO Manual to a Machine-Executable Plan, by Ruocheng Wang, Yunzhi Zhang, Jiayuan Mao, Chin-Yi Cheng and Jiajun Wu

https://github.com/Relento/lego_release

Related Articles

DIY AI To Sort 2 Metric Tons Of Lego

Changing Rooms - Let AI Arrange The Furniture

Hands-On Lego Robotics For Kids


Last Updated ( Sunday, 07 August 2022 )