BigQuery Now Open to All

Written by Alex Denham

Monday, 07 May 2012

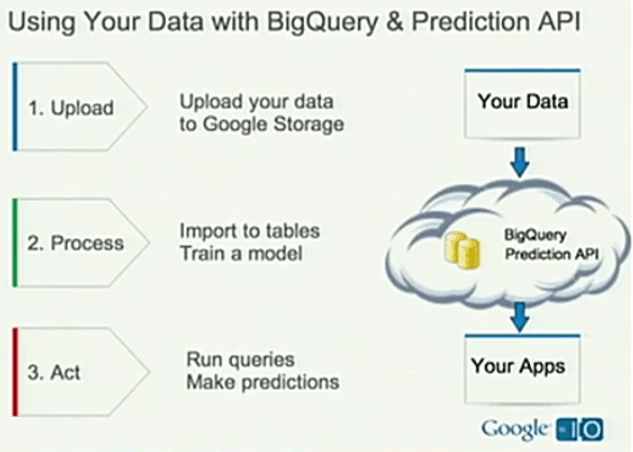

After a period of limited-availability testing, BigQuery, a cloud-based service from Google for analyzing very large sets of data, is now publicly available. Google BigQuery is a web service that allows you to run SQL-like queries against very large datasets, with potentially billions of rows. Designed to be scalable and easy to use, it is intended to let developers and businesses tap into powerful data analytics on demand. BigQuery was originally announced at Google I/O 2010 along with the Google Prediction API. At that time a preview edition, an API that worked with REST and JSON, was available to a limited number of enterprises and developers.

Last November, as we reported, a new release with a graphical user interface was made available, by invitation only, to companies of all sizes. Now it has become publicly available, as part of Google's endeavour to bring Big Data analytics to all businesses via the cloud.

Developers and businesses can sign up for BigQuery online and query up to 100 GB of data per month for free. There's an introductory pricing plan for storing and querying datasets of up to 2 TB, and only after that do you need to contact a sales representative.

More Information

Google BigQuery brings Big Data analytics to all businesses
