Kinect Tracks A Single Finger With A New SDK

Written by Harry Fairhead

Thursday, 04 October 2012

Throw the mouse away - you have a finger! Pointing and grasping are natural things to do with your hand, and now a company has found a way to make the Kinect depth camera sensitive enough to detect where you are pointing and what you are grasping.

The idea of a "Minority Report" type UI isn't that good - all that arm waving. A much better idea is to control your machine with small hand movements, but these are much more difficult to detect reliably. Now we have a way to do the job using two Kinects and an SDK. 3Gear Systems has just released an SDK that, with the help of two Kinects or other depth cameras, can detect small finger-based gestures.

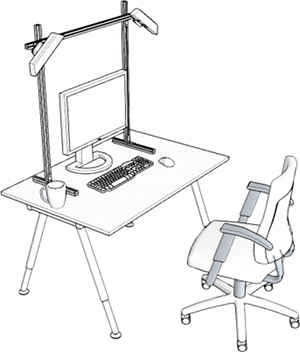

The actual hardware rig looks a bit amateur, but who cares? This is clearly an alpha release. You have to lash two Kinects to a fixed frame and then install the SDK. Before you can try the system out, you have to spend a few minutes calibrating it to recognize your hands. Once this is done, your details are kept for reuse. Applications can use small gestures involving the fingers and wrist, such as pinching and pointing, and this is better than the large-scale body and arm movements that a single Kinect would respond to.

The company describes its approach as follows:

"To make this work, we had to develop new computer graphics algorithms for reconstructing the precise pose of the user's hands from 3D cameras. A key component of the algorithm is to use a database of pre-computed 3D images corresponding to each possible hand configuration in the workspace. The 3D image database is efficiently sampled and indexed to enable extremely fast searches. At run-time, the images from the 3D cameras are used to 'look up' the pose of the hand using the database. This way, the user's hand pose can be determined within milliseconds - fast enough for interactive applications and a short enough time to avoid the effects of 'lag' or high latency."
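The database lookup described above can be illustrated with a toy example. This is only a sketch of the general idea, a coarse index followed by a nearest-neighbor search, and not 3Gear's actual algorithm or API. The four-number "depth descriptors", the pose names and the functions `bucket_key`, `build_index` and `lookup_pose` are all hypothetical.

```python
from collections import defaultdict
from math import dist

# Hypothetical toy database: each entry pairs a tiny "depth image" descriptor
# (just 4 depth samples in metres) with the hand pose that produced it.
# A real system would store far larger images for many more poses.
POSE_DATABASE = [
    ((0.50, 0.50, 0.50, 0.50), "open_hand"),
    ((0.50, 0.42, 0.42, 0.50), "pinch"),
    ((0.40, 0.55, 0.55, 0.55), "point"),
]


def bucket_key(descriptor, cell=0.1):
    """Quantize a descriptor coarsely so that similar images share a bucket."""
    return tuple(round(d / cell) for d in descriptor)


def build_index(database, cell=0.1):
    """Group database entries by their coarse bucket key (done once, offline)."""
    index = defaultdict(list)
    for descriptor, pose in database:
        index[bucket_key(descriptor, cell)].append((descriptor, pose))
    return index


def lookup_pose(index, database, observed, cell=0.1):
    """Return the pose whose stored descriptor is closest to the observed one.

    Only the matching bucket is searched; if it is empty we fall back to a
    full scan. Searching a single bucket is approximate, because the true
    nearest entry may sit in a neighbouring bucket.
    """
    candidates = index.get(bucket_key(observed, cell)) or database
    _, pose = min(candidates, key=lambda entry: dist(entry[0], observed))
    return pose


index = build_index(POSE_DATABASE)
print(lookup_pose(index, POSE_DATABASE, (0.50, 0.43, 0.41, 0.50)))
```

The expensive work of generating and indexing the images happens ahead of time, so the run-time cost is a key computation plus a comparison against a handful of candidates, which is what makes a lookup in milliseconds plausible.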

The SDK is currently free to download and you can build it into your applications. At the moment it only runs under Windows 7 64-bit. Suggested applications include manipulating virtual 3D objects, touch-free interaction in dangerous or sterile environments, gaming and, of course, anything else you can think of. Its two shortcomings are that it requires calibration and that it only recognizes a fixed set of gestures. The company is working to remove both constraints.

The SDK will be offered in a free version after it is out of beta, but look out for a commercial version. The world of 3D gesture-based input seems to be hotting up at the moment, with not only 3Gear Systems but also the mysterious Leap Motion sensor, which claims amazing accuracy without much of a clue as to how it works. It is interesting that the 3Gear system uses two Kinects set to view the scene from only slightly different angles. This appears to increase the resolution of the models that can be created and provides a way to map features that would otherwise be obscured from a single viewpoint.
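To see why a second viewpoint helps, here is a minimal sketch of how points from two depth cameras might be brought into one shared coordinate frame and combined. It is not taken from the 3Gear SDK. The functions `to_world` and `merge_views`, the yaw angles and the camera offsets are all assumed values for illustration, and a real rig would get its transforms from calibration.

```python
from math import cos, sin, radians


def to_world(point, yaw_deg, offset):
    """Rotate a camera-frame point about the vertical (y) axis, then translate.

    yaw_deg and offset stand in for a camera's calibrated pose (assumed values).
    """
    x, y, z = point
    a = radians(yaw_deg)
    xr = cos(a) * x + sin(a) * z
    zr = -sin(a) * x + cos(a) * z
    ox, oy, oz = offset
    return (xr + ox, y + oy, zr + oz)


def merge_views(views):
    """Combine several (points, yaw_deg, offset) camera views into one cloud."""
    cloud = []
    for points, yaw_deg, offset in views:
        cloud.extend(to_world(p, yaw_deg, offset) for p in points)
    return cloud


# Hypothetical data: camera A sees two points on the hand, camera B sees a
# third point that is hidden from A (say, a fingertip behind the palm).
camera_a = ([(0.00, 0.10, 0.60), (0.02, 0.10, 0.61)], -10.0, (-0.15, 0.0, 0.0))
camera_b = ([(0.01, 0.09, 0.62)], 10.0, (0.15, 0.0, 0.0))

merged = merge_views([camera_a, camera_b])
print(len(merged))
```

The merged cloud contains points that neither camera could supply alone, which is the sense in which two slightly different viewpoints can fill in features that are obscured from one of them.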



Last Updated (Thursday, 04 October 2012)