Super Seeing - Eulerian Magnification

Written by Harry Fairhead

Monday, 04 June 2012

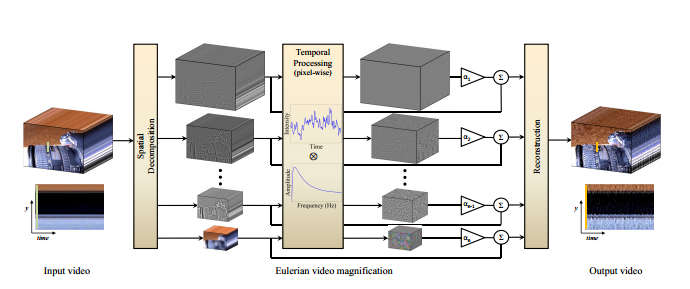

If you have ever wanted the vision of a superhero then Eulerian Magnification, a new image processing algorithm, is just what you have been looking for. It can see your pulse at great distances, and perceive tiny movements that are otherwise invisible.

When you view a scene you naturally assume that you are seeing things as they are. However, the human perceptual system, and the eye in particular, is limited in what it can detect - in the jargon it has a limited spatio-temporal sensitivity. The human eye provides you with a view of the world that is smeared out in time. If it didn't then motion pictures wouldn't work because you would see a sequence of stills rather than a moving image. Even if you know this fact, you probably don't realize how much information is being missed and just how temporally smeared your vision actually is.

A group of researchers at MIT CSAIL and Quanta Research Cambridge, MA has applied very fundamental image processing techniques - spatial decomposition and temporal filtering - to standard video to show details that would normally require expensive special hardware to see.
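To get a feel for why a spatial step helps, here is a minimal sketch that uses plain block averaging as a stand-in for spatial decomposition. It is an illustration under stated assumptions, not the researchers' implementation: the noise level, patch size and frame count are made-up values, and the "video" is simulated rather than read from a file.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

# All three values below are assumptions chosen for illustration only.
NOISE_SIGMA = 2.0        # per-pixel sensor noise, in grey levels
PATCH_PIXELS = 32 * 32   # number of pixels pooled together
FRAMES = 200             # number of simulated frames


def noisy_patch(true_value):
    """Simulate one frame of a flat patch: the true value plus pixel noise."""
    return [random.gauss(true_value, NOISE_SIGMA) for _ in range(PATCH_PIXELS)]


single_pixel = []  # what one pixel reports, frame by frame
patch_mean = []    # what the pooled (spatially averaged) patch reports

for _ in range(FRAMES):
    patch = noisy_patch(128.0)
    single_pixel.append(patch[0])
    patch_mean.append(sum(patch) / len(patch))

single_noise = statistics.stdev(single_pixel)
pooled_noise = statistics.stdev(patch_mean)

print(f"noise seen by one pixel:  {single_noise:.3f} grey levels")
print(f"noise after pooling:      {pooled_noise:.3f} grey levels")
```

Averaging many pixels leaves a shared signal untouched while independent noise largely cancels, which is how a change far smaller than the per-pixel noise can become measurable.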

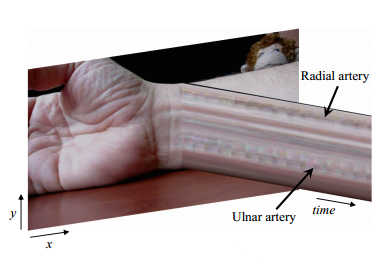

The filter enhances color variations as well as true physical movement and can reveal features that are normally below the noise level of the video and human perceptual system combined. The amazing part of the story is that the techniques used are standard filtering algorithms that could have been applied to this problem years ago.

Put simply, if you point it at a human face you can see the color change as the blood pumps in and out in time with the heartbeat. Point it at a wrist and you can see the pulse moving the skin. The medical applications for this sort of technique could be immense, allowing remote monitoring via a standard video camera without the need for the patient to be wired up to a monitor. The researchers' video shows it in action.
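The temporal side of the idea can be sketched for a single pixel: band-pass its brightness over time, multiply the result by a gain, and add it back. The code below is a rough illustration, not the published method. The band-pass is built as the difference of two simple first-order low-pass filters, and the frame rate, pulse rate, filter constants and gain are all assumed values.

```python
import math


def lowpass(signal, alpha):
    """First-order IIR low-pass filter (an exponential moving average)."""
    out, state = [], signal[0]
    for x in signal:
        state += alpha * (x - state)
        out.append(state)
    return out


def magnify(signal, alpha_fast=0.5, alpha_slow=0.05, gain=50.0):
    """Amplify the temporal band between the two low-pass cut-offs.

    The filter constants and gain are illustrative guesses, not tuned values.
    """
    fast = lowpass(signal, alpha_fast)
    slow = lowpass(signal, alpha_slow)
    band = [f - s for f, s in zip(fast, slow)]   # crude band-pass
    return [x + gain * b for x, b in zip(signal, band)]


def swing(values):
    """Peak-to-peak range of a sequence."""
    return max(values) - min(values)


# Simulated pixel: steady skin tone plus a faint 1.2 Hz "pulse" (assumed).
FPS = 30
PULSE_HZ = 1.2
frames = [128.0 + 0.2 * math.sin(2 * math.pi * PULSE_HZ * t / FPS)
          for t in range(300)]

magnified = magnify(frames)

# Skip the first frames while the slow filter settles.
print(f"original swing:  {swing(frames[150:]):.2f} grey levels")
print(f"magnified swing: {swing(magnified[150:]):.2f} grey levels")
```

The steady background level passes through unchanged because both low-pass filters converge to it and cancel, so only variation inside the chosen frequency band is exaggerated.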

In case you are wondering what the "Eulerian" is all about, the term comes from fluid dynamics. There are two ways to work with a fluid flow. You can follow individual particles and track where they go, the Lagrangian approach; or you can fix your attention on one spot and describe what happens there, the Eulerian approach. Previous efforts to perform the same analysis had made use of Lagrangian techniques, which are computationally more expensive. The simpler Eulerian approach allows the processing to be done in real time at 640x480 at 45 frames per second, without the help of a GPU. The technique could be sped up using a GPU and the method looks as if it could be implemented using FPGAs or similar custom hardware.
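A one-dimensional toy example shows why watching fixed pixels can stand in for tracking motion. For a smooth intensity profile and a small shift, amplifying the brightness change at each fixed position gives nearly the same result as moving the profile further. The profile shape, shift and gain below are assumptions picked for illustration. The approximation is only expected to hold while the magnified shift stays small compared with the features in the image.

```python
import math


def profile(x):
    """Smooth 1-D intensity profile (a Gaussian bump); purely illustrative."""
    return math.exp(-((x - 50.0) ** 2) / (2 * 8.0 ** 2))


DELTA = 0.05   # true sub-pixel motion between two frames (assumed)
GAIN = 10.0    # amplification applied to the temporal change (assumed)

xs = range(100)
reference = [profile(x) for x in xs]        # frame before the motion
moved = [profile(x - DELTA) for x in xs]    # frame after the motion

# Eulerian view: stay at each fixed pixel and exaggerate its change in value.
eulerian = [m + GAIN * (m - r) for r, m in zip(reference, moved)]

# What explicitly moving the profile (1 + GAIN) times as far would give.
# A Lagrangian method would have to estimate DELTA first, then warp the image.
target = [profile(x - (1 + GAIN) * DELTA) for x in xs]

max_error = max(abs(e - t) for e, t in zip(eulerian, target))
max_change = max(abs(t - r) for t, r in zip(target, reference))

print(f"largest change caused by the magnified motion: {max_change:.4f}")
print(f"largest Eulerian approximation error:          {max_error:.4f}")
```

No motion estimate is computed anywhere in the Eulerian line, only a per-pixel difference, which is what makes this style of processing cheap.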

As well as medical monitoring applications, there are lots of physics and engineering tasks that previously required expensive hardware and complex analysis and that could now be tackled with an ordinary video camera. The final example in the video, the vibration of a DSLR caused by its mirror being actuated, is enough to convince me to use a tripod. The research team promises to publish the code very soon.

More Information

Eulerian Video Magnification for Revealing Subtle Changes in the World (pdf)


Last Updated ( Wednesday, 24 December 2014 )