Nao Walks Alone

Written by Lucy Black

Saturday, 03 August 2013

As well as telling jokes, dancing and generally mucking about, Nao is also into real research. A new video shows Nao, with a depth camera on its head, walking through rough terrain all on its own. This is what a robot has to master if it is ever to get out more.

I don't know about you, but whenever I see a robot video my first question is always "how much is pre-programmed?". You see a troupe of Naos dancing and you think "these are all pre-programmed moves", or you see Nao doing something cute by way of human interaction and you think "being pre-programmed, this is little better than a puppet". If the environment were to change while Nao was performing most of its tricks, the chances are that it would just fall over. These "party tricks" are fun but ultimately contribute very little to robotics.

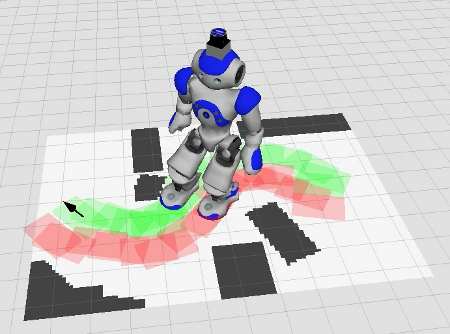

Robots have to be self-contained in the sense that they have to adapt to the environment that they find themselves in. In other words, dancing Naos need to react to the music, avoid crashing into other dancers and cope with an uneven dance floor and furniture - this is really difficult.

Now we have a video from the Freiburg Humanoid Robots Lab that shows that Nao can be smarter than the average robot - even if it is at the cost of wearing a silly-looking depth camera on its head. What is happening is that Nao takes a depth image of its environment and then uses a footstep planner to work out where to put its foot next so as to avoid obstacles and not fall over.

When you watch this video, notice that it has been speeded up. In real life Nao's progress would seem painfully slow. Although I Programmer has criticized slow robots in other contexts, in this case speed isn't the issue - speeding up the software is something that can be worked on once the software is right.
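To give a feel for what a footstep planner has to do, here is a minimal Python sketch. It is not the Freiburg lab's planner: it assumes the depth image has already been reduced to a small grid of integer heights, and the thresholds, costs, action names and the `neighbours` and `plan` functions are all made up for illustration. It simply searches for the cheapest sequence of steps that never asks the robot to climb, drop or step over more than it can manage.

```python
import heapq

# Illustrative assumptions only, not values from any real planner.
MAX_STEP_UP = 2    # tallest rise the robot may step onto
MAX_STEP_DOWN = 2  # largest drop it may step down
MAX_STEP_OVER = 3  # tallest one-cell obstacle it may step over


def neighbours(height_map, cell):
    """Yield (next_cell, cost, action) for every step judged safe from cell."""
    rows, cols = len(height_map), len(height_map[0])
    r, c = cell
    here = height_map[r][c]
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < rows and 0 <= nc < cols:
            diff = height_map[nr][nc] - here
            if diff == 0:
                yield (nr, nc), 1, "planar"
            elif 0 < diff <= MAX_STEP_UP:
                yield (nr, nc), 2, "step-onto"
            elif -MAX_STEP_DOWN <= diff < 0:
                yield (nr, nc), 2, "step-down"
        # Step over: land two cells away at the same height,
        # clearing a low obstacle in the cell between.
        fr, fc = r + 2 * dr, c + 2 * dc
        if 0 <= fr < rows and 0 <= fc < cols:
            obstacle = height_map[nr][nc] - here
            if height_map[fr][fc] == here and 0 < obstacle <= MAX_STEP_OVER:
                yield (fr, fc), 3, "step-over"


def plan(height_map, start, goal):
    """Uniform-cost search; returns a list of (action, cell) or None."""
    frontier = [(0, start, [])]
    visited = set()
    while frontier:
        cost, cell, path = heapq.heappop(frontier)
        if cell == goal:
            return path
        if cell in visited:
            continue
        visited.add(cell)
        for nxt, step_cost, action in neighbours(height_map, cell):
            if nxt not in visited:
                heapq.heappush(
                    frontier, (cost + step_cost, nxt, path + [(action, nxt)])
                )
    return None


# A toy terrain: a wall of height 9 with a low gap of height 1 in the last row.
terrain = [
    [0, 0, 9, 0],
    [0, 3, 9, 0],
    [0, 0, 1, 0],
]
for action, cell in plan(terrain, (0, 0), (0, 3)) or []:
    print(action, cell)
```

A real planner works with continuous foot poses, the shape of the robot's body and a far richer set of motions, but the basic loop of "propose a step, check that it is safe, keep the cheapest sequence" is the same idea in miniature.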

The depth camera on Nao's head is an Asus Xtion Pro Live, which is a Kinect clone. In previous experiments Nao used a laser depth scanner which was much more accurate but much more expensive.

"From the depth data, our system estimates the robot's pose and maintains a height-map representation of the environment. Based on this model, our technique iteratively computes sequences of safe actions including footsteps and whole-body motions, leading the robot to target locations. Hereby, the planner chooses from a set of actions that consists of planar footsteps, step-over actions, as well as parameterized step-onto and step-down actions. To efficiently check for collisions during planning, we developed a new approach that takes into account the shape of the robot and the obstacles."

The footstep planning software is available in the ROS repository and can be used by any robot that runs ROS. If this can be improved on we can look forward to robots marching around our houses without any difficulties and this really would be a big step forward - pun most certainly intended.

More Information

Details can be found in an upcoming IROS 2013 Paper:



Last Updated ( Saturday, 03 August 2013 )