Mars Curiosity Rover Gets A Software Update

Written by Harry Fairhead

Sunday, 12 August 2012

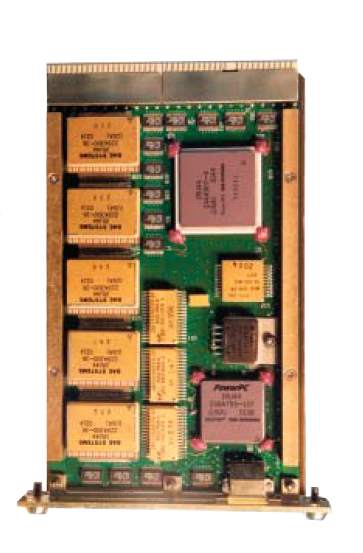

After surviving its "seven minutes of terror" during landing, Curiosity is now being subjected to a few days of code terror. It is currently receiving a software update in a sort of "patch weekend". The first thing you want to do when you get somewhere new is update your software. That is fine when you have only travelled a few hundred miles, but Curiosity is on the surface of Mars. If you have ever worried about pushing out new code to users, this should put the whole operation in context.

Curiosity has only a redundant pair of fairly low-power computers to run its entire system. Why use such low-powered computers? The answer is that the hardware has to be radiation-hardened and reliable. You don't want to send a complex CPU into space only to discover that it has the equivalent of the Pentium floating-point bug. The hardware is a pair of RAD750 processors from BAE Systems, which cost around $200,000 each. The processor is based on the PowerPC 750 and runs at 200MHz, and each computer has 256MB of RAM and 2GB of flash memory.

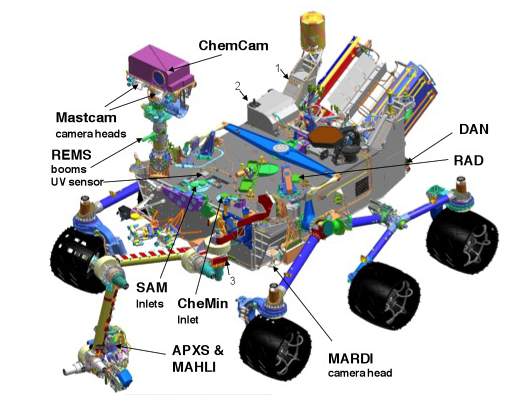

The operating system has also been chosen for reliability. It is VxWorks, a real-time operating system used in many systems that require high reliability. It is based on a traditional multitasking kernel with priority-based preemptive scheduling, optional round-robin time slicing among tasks of equal priority, and interrupt support. Code is written in C or C++ and developed using Workbench, an Eclipse-based environment.

Overall it isn't luxury from either a hardware or a software point of view, but it is a lot better than the systems used for the Apollo landings. Even so, the hardware doesn't have enough memory to hold all of the code needed both to land and to run the rover. So the landing software was in control for the seven minutes of terror, and now it is being replaced with the full "smart" software that will run the robotic mission semi-autonomously. The rover team is calling it a brain transplant, but that makes it sound almost reasonable. The upgrade should take place between August 10 and 13, and so far (on the 12th) it seems to be going well.

Ben Cichy, the chief software engineer for the rover, said: "We designed the mission from the start to be able to upgrade the software as needed for different phases of the mission. The flight software version Curiosity currently is using was really focused on landing the vehicle. It includes many capabilities we just don't need any more. It gives us basic capabilities for operating the rover on the surface, but we have planned all along to switch over after landing to a version of flight software that is really optimized for surface operations."

The new software includes image-processing code that lets the rover avoid hazards, i.e. rocks and holes, without intervention from Earth, as well as code to operate its many tools and systems. The round-trip transmission delay between Mars and Earth is too long to drive the robot by "telepresence", that is, by steering it in real time from Earth.
You either have to work in a stop-go mode, as with the Spirit and Opportunity rovers, where you look, plan and execute a drive and then evaluate what happened, or you need some level of on-board planning. The idea is that the team will tell the rover where to go and it will plan the route itself, using the "hazcams" to recognize obstacles or local dangers as it drives along. The software has already been tested to some extent by being uploaded to the earlier rovers, Spirit and Opportunity. It can navigate on its own for around 50m (about 165ft). Compared with the ability of Google's self-driving car to navigate without any help, this too seems a little underpowered. Once again, however, what matters is reliability, and in space autonomous robots lag behind their earthbound counterparts. I can only hope that the code is smart enough to drive the rover over the surface without putting it nose first into a crater; that would not be good for the image of software or AI.

While the download is being performed, the rover is effectively out of action as it tests various subsystems. The rover team is keeping busy studying photos sent back earlier to decide what should be explored first once "patch weekend" is over.

Related Articles

Program Curiosity With Kodu On Mars


Last Updated (Sunday, 21 June 2020)