Google has a way of taking the shake out of your video

Written by David Conrad

Sunday, 10 July 2011

Software video stabilization is a great way to avoid making your viewers feel ill while watching your creation, but with the right algorithm it can also provide the camera motions a great director would have used. This isn't just a theoretical advance; it is in use now on YouTube.

Sometimes you find a video that is really interesting, but watching it is vomit-inducing because no attention was paid to keeping the camera steady. This is a particular problem for casual videographers because they don't use the heavy tripods, dollies and Steadicams used by professionals. Even the size of the typical video camera, often just a mobile phone, works against a steady viewpoint, as its low mass bounces around in the hand. Ever taken a video while walking? If you have, you will know the regular up-and-down motion that induces motion sickness in any viewer.

OK, time to do something about it, and the solution is software video stabilization. Yes, you can spend your time trying to make the original better, but why bother when software can do the job and turn you into a videographer of quality?

Given that stabilization amounts to a virtual re-shoot of the video, why not use the principles of the great directors? Google Research has worked out an algorithm that can stabilize a video after it has been uploaded, using a new camera path that conforms to the principles of great cinematography.

Any stabilization algorithm has to follow three fairly obvious but difficult-to-implement steps.

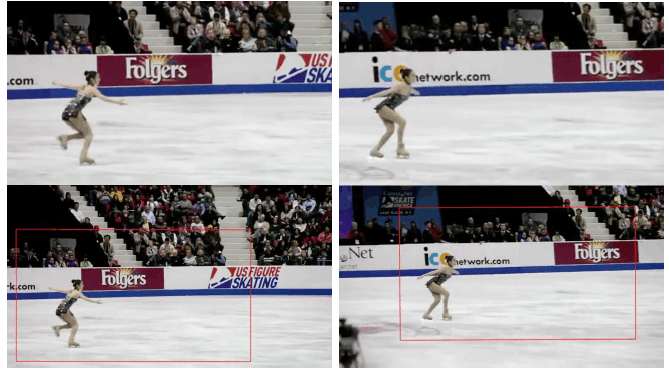

Once you have the new path, the simplest way to transform the video is to move a cropping frame, slightly smaller than the original video, along the path.
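To make the cropping idea concrete, here is a minimal sketch in Python. It is not Google's implementation: the function names, the pixel sizes and the sample path are all hypothetical, and the path is simplified to a pure translation of the crop window's centre. It places a crop window on each frame and checks whether the window still lies inside the original image.

```python
from typing import List, Tuple

Rect = Tuple[float, float, float, float]  # (left, top, right, bottom)


def crop_rect(frame_w: int, frame_h: int, crop_w: int, crop_h: int,
              dx: float, dy: float) -> Rect:
    """Crop window centred on the frame centre, shifted by (dx, dy) pixels."""
    cx = frame_w / 2 + dx
    cy = frame_h / 2 + dy
    return (cx - crop_w / 2, cy - crop_h / 2,
            cx + crop_w / 2, cy + crop_h / 2)


def inside_frame(rect: Rect, frame_w: int, frame_h: int) -> bool:
    """True if the crop window lies entirely within the original frame."""
    left, top, right, bottom = rect
    return left >= 0 and top >= 0 and right <= frame_w and bottom <= frame_h


def max_shift(frame_w: int, frame_h: int,
              crop_w: int, crop_h: int) -> Tuple[float, float]:
    """Largest horizontal and vertical shift that keeps the window inside."""
    return ((frame_w - crop_w) / 2, (frame_h - crop_h) / 2)


if __name__ == "__main__":
    # Hypothetical 1280x720 video with a crop window at 80% of each dimension.
    FRAME_W, FRAME_H, CROP_W, CROP_H = 1280, 720, 1024, 576
    # Hypothetical per-frame offsets of the crop window (the "new path").
    path: List[Tuple[float, float]] = [(0, 0), (40, -10), (90, 20), (150, 30)]

    print("max shift:", max_shift(FRAME_W, FRAME_H, CROP_W, CROP_H))
    for i, (dx, dy) in enumerate(path):
        rect = crop_rect(FRAME_W, FRAME_H, CROP_W, CROP_H, dx, dy)
        print(i, rect, "ok" if inside_frame(rect, FRAME_W, FRAME_H) else "outside")
```

The margin returned by `max_shift` is the slack the stabilizer has to work with: a smaller crop window gives a larger margin, at the cost of showing less of the scene.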

The only problem occurs if the cropping frame moves outside of the original. Then you have to either invent new material to fill in the missing parts of the frame or put up with blank edges.

The key breakthrough in the new algorithm is the construction of a smooth path that tries to incorporate cinematic principles. First the path of the camera is estimated, and this is broken into segments corresponding to constant, linear and parabolic paths. These are assumed to correspond to the traditional camera paths as used by the most experienced directors:

"From a cinematographic standpoint, the most pleasant viewing experience is conveyed by the use of either static cameras, panning ones mounted on tripods or cameras placed onto a dolly. Changes between these shot types can be obtained by the introduction of a cut or jerk-free transitions, i.e. avoiding sudden changes in acceleration."

A constant path is a static camera and a linear path is a steady pan or dolly shot. Once you realize that the parabolic path is one of constant acceleration, you can see that splitting the path up in this way gives you the best approximation to what a skilled director might have done.

Nice idea, but how do you do it? The proposed smooth path has to fit the actual path reasonably well, otherwise it is going to be impossible to "re-shoot" the video without the cropping frame moving out of shot and blank borders appearing. The method used finds an optimal division of the actual path by performing a constrained optimization. The constraints are to keep the cropping frame within the original frame, so avoiding the problem of blank edges, and to be as close to the original path as possible. In addition, it is also possible to add "saliency" constraints which insist that important features, such as faces, move smoothly in the frame.

The algorithm, which uses a general linear programming approach, is flexible enough to include a range of novel constraints. For example, if a frame has motion blur, then moving the camera in the direction of that blur makes the result look better.
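The link between constant, linear and parabolic segments and a smooth path can be illustrated with finite differences: a constant path has a zero first difference, a linear path a zero second difference and a parabolic path a zero third difference. The Python sketch below assumes, as the "L1" in the paper's title suggests, that the cost being minimized is a weighted sum of the absolute values of these differences. The weights, the one-dimensional paths and the function names are hypothetical, and the code only evaluates the cost and a simplified inclusion constraint for a candidate path; it does not solve the linear program.

```python
from typing import List, Sequence


def diff(xs: Sequence[float]) -> List[float]:
    """Finite difference: the change from each sample to the next."""
    return [b - a for a, b in zip(xs, xs[1:])]


def l1(xs: Sequence[float]) -> float:
    """L1 norm: the sum of absolute values."""
    return sum(abs(x) for x in xs)


def path_cost(path: Sequence[float],
              w1: float = 1.0, w2: float = 1.0, w3: float = 1.0) -> float:
    """Weighted L1 cost of velocity, acceleration and jerk along a 1-D path."""
    d1 = diff(path)  # velocity: zero for a static camera
    d2 = diff(d1)    # acceleration: zero for a steady pan
    d3 = diff(d2)    # jerk: zero for a constant-acceleration transition
    return w1 * l1(d1) + w2 * l1(d2) + w3 * l1(d3)


def within_slack(smooth: Sequence[float], actual: Sequence[float],
                 slack: float) -> bool:
    """Simplified inclusion constraint: the smooth path may deviate from the
    actual path by at most `slack` (the margin left by the crop window)."""
    return all(abs(s - a) <= slack for s, a in zip(smooth, actual))


if __name__ == "__main__":
    static = [5, 5, 5, 5, 5, 5]         # constant path
    pan = [2 * t for t in range(6)]     # linear path
    ease = [t * t for t in range(6)]    # parabolic path

    print("static, 1st difference:", diff(static))
    print("pan,    2nd difference:", diff(diff(pan)))
    print("ease,   3rd difference:", diff(diff(diff(ease))))

    shaky = [0, 3, 1, 4, 2, 5]          # hypothetical estimated camera path
    candidate = [0, 1, 2, 3, 4, 5]      # hypothetical smooth replacement
    print("cost of shaky path:    ", path_cost(shaky))
    print("cost of candidate path:", path_cost(candidate))
    print("candidate within slack of 2:", within_slack(candidate, shaky, 2))
```

An absolute-value cost of this kind, together with bounds on how far the smooth path may stray, can be written with linear constraints only, which is consistent with the article's statement that the method uses linear programming.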

If you want to try out the algorithm, you can do so in the YouTube Video Editor. You can also see it in action in the video below:

The real-time preview is created using a distributed processing implementation on Google's hardware. The only parameter you can adjust is the size of the crop window. The smaller the crop window, the more freedom the algorithm has to find a stabilized path that keeps it within the image. Of course, a smaller crop window reduces what you can see. The resulting path is optimal for the crop window selected, but it is suggested that the window size too could be added to the optimization.

More information

Auto-Directed Video Stabilization with Robust L1 Optimal Camera Paths


Last Updated (Sunday, 10 July 2011)

The cropping frame can be seen in the bottom row moving along the optimal path
