A Worm's Mind In A Lego Body

Written by Lucy Black

Sunday, 16 November 2014

Take the connectome of a worm and transplant it as software in a Lego Mindstorms EV3 robot - what happens next? It is a deep and long-standing philosophical question: are we just the sum of our neural networks? Of course, if you work in AI you take the answer mostly for granted, but until someone builds a human brain and switches it on we really don't have a concrete example of the principle in action.

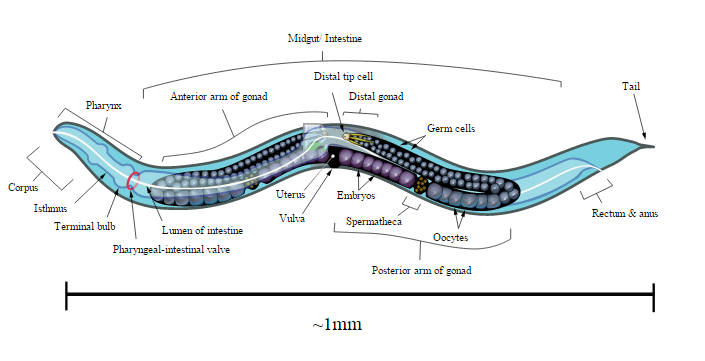

The nematode worm Caenorhabditis elegans (C. elegans) is tiny and has only 302 neurons. These have been completely mapped, and the OpenWorm project is working to build a complete simulation of the worm in software. One of the founders of the OpenWorm project, Timothy Busbice, has taken the connectome and implemented it as an object-oriented neuron program.

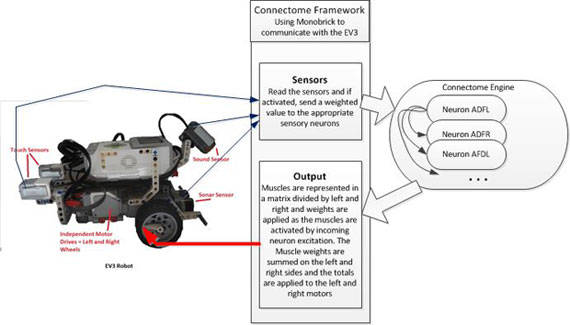

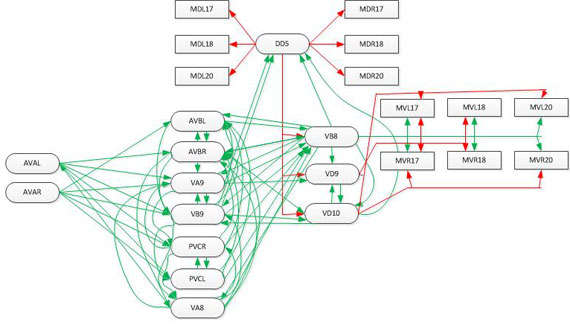

The model is accurate in its connections and makes use of UDP packets to fire neurons. If two neurons have three synaptic connections, then when the first neuron fires a UDP packet is sent to the second neuron with the payload "3". The neurons are addressed by IP and port number.

The system uses an integrate-and-fire algorithm. Each neuron sums the weights it receives and fires if the total exceeds a threshold. The accumulator is zeroed if no message arrives in a 200 ms window or if the neuron fires. This is similar to what happens in the real neural network, but not exact.

The software works with sensors and effectors provided by a simple LEGO robot. The sensors are sampled every 100 ms. For example, the sonar sensor on the robot is wired as the worm's nose. If anything comes within 20 cm of the "nose" then UDP packets are sent to the sensory neurons in the network. The same idea is applied to the 95 motor neurons, but instead of driving the two rows of muscles on the worm's left and right, they are mapped to the left and right motors on the robot. The motor signals are accumulated and applied to control the speed of each motor. The motor neurons can be excitatory or inhibitory, so both positive and negative weights are used.
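The integrate-and-fire rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Busbice's code: the `Neuron` class, the `stimulate` helper, and the threshold values are hypothetical, time is passed in explicitly rather than read from a clock, and an in-process queue stands in for the UDP packets that the real system sends between neurons.

```python
from collections import deque
from dataclasses import dataclass, field

TIMEOUT_MS = 200  # accumulator reset window quoted in the article


@dataclass
class Neuron:
    name: str
    threshold: int  # hypothetical value; the article does not state one
    # Postsynaptic neuron name -> signed weight (number of synapses,
    # negative for an inhibitory connection).
    targets: dict = field(default_factory=dict)
    accumulator: int = 0
    last_input_ms: int = 0

    def receive(self, weight: int, now_ms: int) -> bool:
        """Integrate one incoming message; return True if the neuron fires."""
        # Zero the accumulator if no message arrived within the window.
        if now_ms - self.last_input_ms > TIMEOUT_MS:
            self.accumulator = 0
        self.last_input_ms = now_ms
        self.accumulator += weight
        if self.accumulator > self.threshold:
            self.accumulator = 0  # firing also zeroes the accumulator
            return True
        return False


def stimulate(network, name, weight, now_ms, max_steps=1000):
    """Deliver one stimulus and propagate firings; return who fired, in order.

    The queue replaces the UDP transport. max_steps is a safety cap
    (an assumption of this sketch) because a connectome with loops
    could otherwise keep stimulating itself indefinitely.
    """
    fired = []
    queue = deque([(name, weight)])
    steps = 0
    while queue and steps < max_steps:
        target, w = queue.popleft()
        steps += 1
        neuron = network[target]
        if neuron.receive(w, now_ms):
            fired.append(target)
            # One message per connection, payload = synapse count.
            queue.extend(neuron.targets.items())
    return fired


if __name__ == "__main__":
    # Two neurons joined by three synapses, as in the article's example.
    net = {"A": Neuron("A", 2, {"B": 3}), "B": Neuron("B", 2)}
    print(stimulate(net, "A", 3, now_ms=0))
```

With these made-up thresholds, a stimulus of weight 3 on `A` pushes it over its threshold, and the resulting message with payload 3 does the same for `B`. Two sub-threshold inputs only add up if the second arrives within the 200 ms window; otherwise the accumulator starts again from zero.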

And the result? It is claimed that the robot behaved in ways that are similar to those observed in C. elegans. Stimulation of the nose stopped forward motion. Touching the anterior and posterior touch sensors made the robot move forward and back accordingly. Stimulating the food sensor made the robot move forward. Watch the video to see it in action.

The key point is that there was no programming or learning involved to create the behaviors. The connectome of the worm was mapped and implemented as a software system, and the behaviors emerge. The connectome may only consist of 302 neurons but it is self-stimulating and it is difficult to understand how it works - but it does.

Currently the connectome model is being transferred to a Raspberry Pi and a self-contained Pi robot is being constructed. It is suggested that it might have practical application as some sort of mobile sensor - exploring its environment and reporting back results. Given its limited range of behaviors, it seems unlikely to be of practical value, but given more neurons this might change. Is the robot a C. elegans in a different body or is it something quite new? Is it alive? These are questions for philosophers, but it does suggest that the ghost in the machine is just the machine.

For AI researchers, the open question is whether the principle of implementing a connectome scales.

More Information: The Robotic Worm (Biocoder pdf - free on registration)


Last Updated (Sunday, 16 November 2014)