| ConvNetJS - Deep Learning In The Browser |

| Written by Mike James |

| Monday, 07 July 2014 |

|

ConvNetJS brings deep neural networks to your browser. It's easy to use and fast without using a GPU, which means it works almost anywhere. The project was created by Andrej Karpathy, a PhD student at Stanford, in an effort to make neural networks more accessible. There is no GPU acceleration involved, but plain JavaScript seems to do the job very well. It is another example of how the ever-increasing performance of JavaScript is making new things possible.

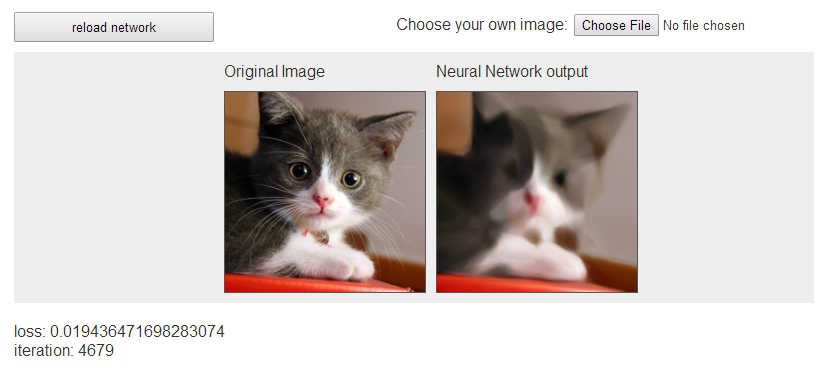

In this case we have a framework for creating and training your network. You build up a network by specifying layers of various types. As one of the types is convolutional, you can build networks that recognize images. However, convolutional image recognizers aren't the only possibility, and you can create general classifiers, regression prediction networks, and more.

Once you have the network definition, you can train it using backprop, either as a classifier or, in regression applications, to minimize a sum of squared errors so that it learns arbitrary data. There is also a MagicNet training class that handles the training automatically for you. If you want to be cutting edge, you could even try out the Deep Q reinforcement learning class to see if you can learn to play games given only the outcome.

If you just want to see neural networks in action, there are nine demos that you can run in your browser. As mentioned earlier, the fact that no GPU is required means that these are very likely to work, and in the browsers I've tried they work remarkably quickly. They are also very well presented. You get a graph of the error (loss) as the network trains, and you can change the usual learning parameters dynamically. Scrolling down reveals a section that provides insights into how the network is doing the job: you can see the features being used to distinguish between the examples. Finally, you get a sample of the network's performance based on what it does to a number of test cases.
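To make the idea of building a network from typed layers more concrete, here is a minimal sketch of a small convolutional classifier definition. The field names and the `Net`, `Trainer` and `Vol` calls follow the ConvNetJS README as best recalled, so treat them as assumptions to check against the project's documentation. The layer sizes, the `layerDefs` name and the `buildAndTrainOnce` helper are made up for illustration. The library calls only run if a global `convnetjs` object has been loaded.

```javascript
// Layer definitions are plain data: one object per layer, listed in order.
// Field names are assumed from the ConvNetJS README; sizes are illustrative.
const layerDefs = [
  // 32x32 RGB input volume
  { type: 'input', out_sx: 32, out_sy: 32, out_depth: 3 },
  // 16 filters of size 5x5; a pad of 2 keeps the 32x32 spatial size
  { type: 'conv', sx: 5, filters: 16, stride: 1, pad: 2, activation: 'relu' },
  // 2x2 pooling with stride 2 halves the width and height
  { type: 'pool', sx: 2, stride: 2 },
  // softmax classifier over 10 classes
  { type: 'softmax', num_classes: 10 }
];

// Hypothetical helper (not part of ConvNetJS): builds the network,
// runs a single training step on one example, then returns the
// network's output for that example.
function buildAndTrainOnce(lib, defs) {
  const net = new lib.Net();
  net.makeLayers(defs);
  const trainer = new lib.Trainer(net, { learning_rate: 0.01, l2_decay: 0.001 });
  const x = new lib.Vol(32, 32, 3); // randomly initialised stand-in for an image
  trainer.train(x, 0);              // one backprop step towards class 0
  return net.forward(x);            // output volume of class probabilities
}

// Only touch the library if it has actually been loaded on the page.
if (typeof convnetjs !== 'undefined') {
  const output = buildAndTrainOnce(convnetjs, layerDefs);
  console.log('class probabilities:', output.w);
}
```

Swapping the final layer for a regression layer, or dropping the `conv` and `pool` entries in favour of fully connected layers, would give the other kinds of network the article mentions; the exact field names for those layers should be taken from the ConvNetJS documentation.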

You just knew there would have to be a cat involved somewhere! In this case the network is learning to reconstruct the photo from a smaller set of weights.

If you want to keep the network configuration you can save it as a JSON file. Similarly, if you have access to a network's data as a JSON file, you can load it and try it out. So, for example, if you have access to the weight data for a network trained for weeks using a GPU array, you can still run the network in a browser and use it to classify things. The whole project is open source and you can get the code from GitHub. A good and very useful piece of work.
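The save-and-reload workflow described above comes down to a JSON round trip. The sketch below uses a hypothetical three-weight linear model as a stand-in for a trained network, so it runs without any library. In ConvNetJS itself the equivalent step is, as far as I recall from its README, `net.toJSON()` followed by `fromJSON()` on a fresh `Net`; check the documentation before relying on that.

```javascript
// Hypothetical stand-in for a trained network: a tiny linear model.
// A real ConvNetJS network would be exported with net.toJSON() instead
// (assumed from the README).
const trained = { weights: [0.25, -1.5, 3.0], bias: 0.5 };

// Weighted sum of the inputs plus the bias.
function predict(model, input) {
  return model.weights.reduce((sum, w, i) => sum + w * input[i], model.bias);
}

// Save: serialise the model to a JSON string (this is what goes in the file).
const saved = JSON.stringify(trained);

// Load: parse the string back into a plain object, possibly on another machine.
const restored = JSON.parse(saved);

// The restored model should give the same prediction as the original.
const input = [1, 2, 3];
const same = predict(trained, input) === predict(restored, input);
console.log('round trip preserved the prediction:', same);
```

Because the weights travel as plain numbers, where the training happened is irrelevant to the browser: only the saved values are needed to run the network.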



|

| Last Updated ( Monday, 07 July 2014 ) |