AI Security At Online Conference: An Interview With Ian Goodfellow

Written by Sue Gee

Saturday, 04 March 2017

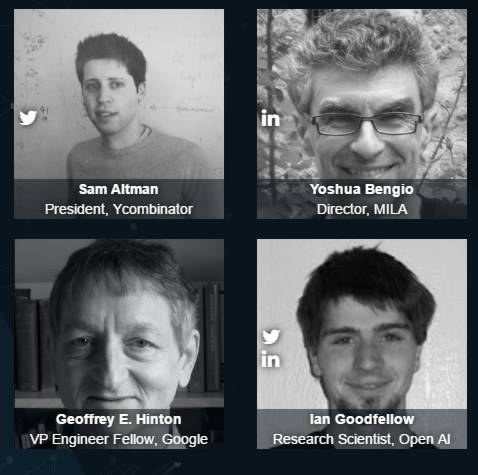

To find out more about the forthcoming AI With the Best online event we talked with Ian Goodfellow, who is one of the event's keynote speakers. We also took the opportunity to find out more about his areas of research, Generative Adversarial Networks and machine learning security, and to ask some questions about neural networks in general.

IProgrammer: We understand you are a third-time speaker for AI With the Best and are helping to organize the event, which takes place online, something that seems to be becoming quite popular.

Ian Goodfellow: Yes, online conferences offer several benefits compared to in-person conferences. It takes less time and money to attend. They cause less global warming. It's more natural for a large audience to interact with a single speaker. Presentations can be paused and replayed. The presentations become available to the rest of the world sooner. I'm volunteering as an adviser after running the Self-Organizing Conference at OpenAI last year, and my role is to help choose the speakers and organize the schedule.

IP: Finding 100 speakers to cover four tracks seems a bit ambitious. Is it working out?

Ian Goodfellow: We're doing well finding speakers. Geoff Hinton, Yoshua Bengio, and Sam Altman, all of whom are keynote speakers, agreed to speak very early on.

[UPDATE: Hinton no longer seems to be participating. According to the complete agenda, the first keynote will be given by Google Brain researchers Fernanda Viégas and Martin Wattenberg.]

[For the current list of over 70 speakers see http://ai.withthebest.com/]

IP: For the paying attendees, what would be the most exciting things about the program you are putting together?

Ian Goodfellow: Attendees should definitely book 1:1 sessions with the speakers. That's one of the really great things about an online conference as opposed to a YouTube video: the software platform allows for interaction.

IP: Will all the speakers offer this?

Ian Goodfellow: Each speaker is free to choose how many slots they make available. I'm not sure if Geoff and Yoshua will choose to do that, but I'll encourage them to. The 1:1 sessions can be enjoyable and useful for both the speakers and the attendees. Last year I met some really interesting attendees, like an astronomer who was using generative models to study the distribution of dark matter.

IP: Your talk at last year's AI With the Best, which is in the video below, was on GANs. Can you give a very brief explanation of that concept?

Ian Goodfellow: A GAN is a machine learning model that can generate new data that resembles the training data. For example, after training on a dataset containing pictures of dogs, a GAN could generate a new picture of an imaginary dog that has never been seen before.
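The interview does not go into how a GAN is trained. As a rough illustration only, the usual formulation pits a generator against a discriminator: the discriminator outputs the probability that a sample is real, and the generator is rewarded when its fakes receive a high probability. The sketch below computes the two losses from discriminator outputs alone. The function names and the example numbers are made up for illustration, and the generator loss shown is the commonly used "non-saturating" variant rather than anything stated in the interview.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Loss the discriminator minimizes.

    d_real: discriminator probabilities assigned to real training samples.
    d_fake: discriminator probabilities assigned to generated samples.
    The loss is low when real samples score near 1 and fakes score near 0.
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)


def generator_loss(d_fake):
    """Non-saturating generator loss: low when fakes are scored as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs at two points in training.
early_fake = [0.10, 0.05]   # discriminator easily spots the fakes
later_fake = [0.50, 0.50]   # discriminator can no longer tell the difference
real_scores = [0.90, 0.95]

print("generator loss, early:", generator_loss(early_fake))
print("generator loss, later:", generator_loss(later_fake))
print("discriminator loss, early:", discriminator_loss(real_scores, early_fake))
print("discriminator loss, later:", discriminator_loss(real_scores, later_fake))
```

As the generator improves, its loss falls while the discriminator's loss rises, which is the adversarial tension that gives the model its name.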

IP: This year you are a keynote speaker. What's your talk about?

Ian Goodfellow: My keynote talk, Machine learning security and privacy, will be about the rapidly growing field of machine learning security. I'll give a brief overview of this emerging field, focusing on my colleagues' recent work in differential privacy.

IP: Is security your current area of research or are you going further with adversarial training?

Ian Goodfellow: I work on both topics. They are closely related. For machine learning security, we study how to fool machine learning models. Right now, several companies are interested in using deep learning for malware detection. The problem is that machine learning models are easy to fool, so the next generation of malware will just fool these machine learning malware detectors. For example, there's a new algorithm called MalGAN (https://arxiv.org/abs/1702.05983). It uses a GAN to generate malware that fools a malware detector into thinking it is legitimate software.

IP: So you need to outwit the fraudsters?

Ian Goodfellow: Yes, there is an arms race between attackers and defenders. New machine learning capabilities help both sides. Machine learning can automate the fraud detection, but it can also automate the fraud.

IP: Are there any easy fixes or will this be something that takes a lot of time?

Ian Goodfellow: It will take some time. There is a lot of work to do, both in terms of theory and in terms of application. On the theory side, we don't yet have a theory explaining how the arms race will end. For example, in cryptography, if you implement your encryption correctly and keep your password secure, it's not feasible for the attacker to read your encrypted messages. The interesting thing about encryption is that, if it's done right, the defender wins. We still don't know if it's possible to guarantee something similar for machine learning.

Right now, if you take a state of the art machine learning algorithm and train it to detect malware, it will still be very easy to fool. We could hope that someday it might be possible to build a malware detector that no one can fool. At the moment, we don't actually have any mathematical theory that tells us whether that is or is not possible. One of the big research problems in machine learning security today is just figuring out how much we can hope for.

IP: There seems to be something of a paradox here. On the one hand we are striving for AI that can fool us into thinking it is human and at the same time we are uncovering new dangers. Machine learning seems to introduce a whole new class of vulnerabilities in the form of adversarial examples. Do you think that it might be possible to find ways to protect NNs against all such attacks or is the problem an unavoidable part of the model?

Ian Goodfellow: This is an important open research question. We would like to be able to write a theorem that tells us how much we can hope to defend against adversarial examples. So far we do not have much theoretical guidance. In practice, it has been much harder to defend than to attack.
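To give a feel for why attacking is so easy, here is a minimal sketch of an adversarial example against a toy linear "malware detector". It is not taken from the interview: the weights, bias and feature values are invented, and the perturbation rule follows the style of the fast gradient sign method, chosen here only because it is simple to show. Every feature is nudged by at most a small `epsilon`, yet the detector's verdict flips.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def predict(weights, bias, x):
    """Probability that x is malicious under a toy logistic-regression detector."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return sigmoid(score)


def sign(v):
    """Return -1, 0 or 1 according to the sign of v."""
    return (v > 0) - (v < 0)


def fgsm_perturb(weights, x, epsilon):
    """Shift each feature by epsilon in the direction that lowers the score.

    For a linear model the gradient of the score with respect to x is simply
    `weights`, so the sign-of-gradient step is x - epsilon * sign(weights).
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical detector parameters and one hypothetical malicious sample.
weights = [0.8, -0.5, 0.3, 0.9, -0.7, 0.4, 0.6, -0.2]
bias = -0.8
x = [0.9, 0.1, 0.8, 0.7, 0.2, 0.6, 0.5, 0.3]
epsilon = 0.3

x_adv = fgsm_perturb(weights, x, epsilon)

print("original sample flagged as malicious:", predict(weights, bias, x) > 0.5)
print("perturbed sample flagged as malicious:", predict(weights, bias, x_adv) > 0.5)
```

Real detectors are far more complex than this toy model, and real malware features cannot always be changed freely, so this only illustrates the basic mechanism of following the model's own gradient to find an input it misclassifies.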

IP: Does the fact that AI is often delivered as a cloud-based service make it easier to attack?

Ian Goodfellow: No, as long as the service provider takes appropriate security precautions it is better to have everything in the cloud so that there is only one system to keep defended and up to date.

IP: Are other approaches to AI, such as SVMs, decision trees, etc., all subject to similar problems?

Ian Goodfellow: Yes. In fact, in many cases, the same input example will fool several different machine learning algorithms.

IP: If people who are already into their careers, rather than starting out as students, want to get into machine learning, what would you recommend as a route?

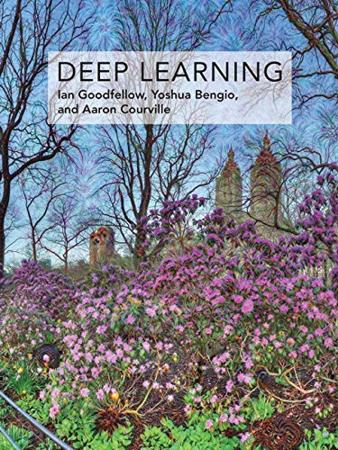

Ian Goodfellow: Read the deep learning textbook [which Ian co-authored with Yoshua Bengio and Aaron Courville] and simultaneously look for a machine learning project they can use for practice. Machine learning is everywhere these days, so it should be possible to find a machine learning project within almost any career. And attend AI With the Best, of course.

Tickets for this event, which let you attend the event and have access to replays for 15 days, cost $60 ($20 for students) if booked before April 16th. But if you book via this link using the code IPROGRAMMER you can take advantage of a discount: 50% until March 14th and 25% after that date.


Last Updated ( Friday, 28 April 2017 )