Principles Of Execution - The CPU

Written by Harry Fairhead

Thursday, 13 October 2022

Page 3 of 4

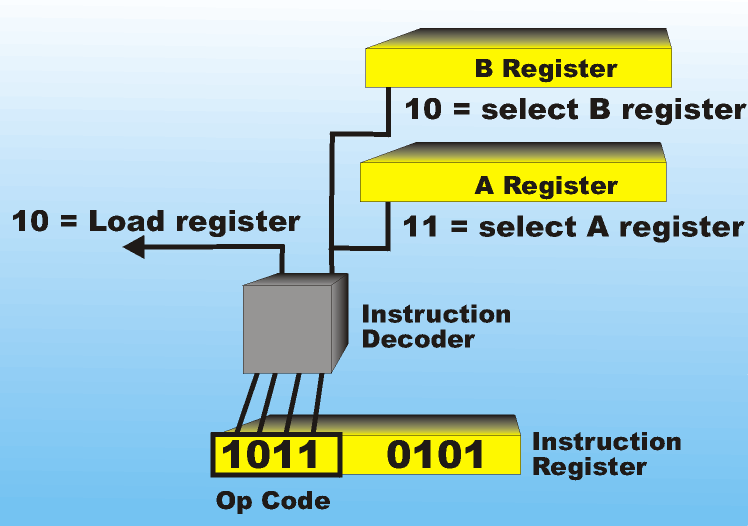

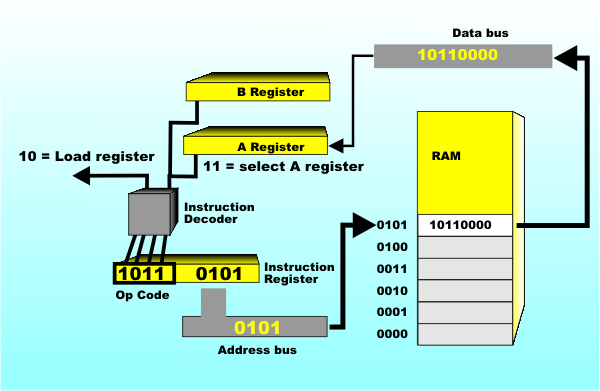

Op-code

The op-code isn’t just any old number; it has a structure. In our example the lower two bits of the op-code act as a mini address that selects which register the operation is going to use, e.g. 11 = A register. The top two bits give the operation to be performed on the register that is selected, e.g. 10 = load the register.

You can see that there is nothing magic in the instruction decoder. The lower two bits are fed into a set of And/Or gates that provide a selection signal to the correct register, i.e. one of the four possible. The upper two bits are similarly decoded using And/Or gates to derive a signal that makes the register “latch” or load whatever is on the data bus.

As the processor becomes more complex there are more registers to select from and more operations to set up. However, the operation of the instruction decoder is always the same. It picks out parts of the op-code and decodes them to a small number of control lines which change what logic elements are selected or deselected within the CPU.

The instruction decoder is a set of logic gates that configure the hardware of the processor depending on the bits that are set or zeroed in the instruction register. Each set of bits feeds a set of logic gates, the outputs of which set the other components in the processor to do the job that the op-code represents. Just as a memory automatically delivers the correct data when you present it with an address, the processor automatically does the operation that the op-code represents. When the op-code is ADD A to B, the bits in the code automatically set up the logic to connect the two registers to the inputs of an arithmetic unit. The op-code drives the processor.

Address Field

So now you can see that the execute phase works in an entirely automatic way to load the A register, but from where? The answer is usually that there is a second field in the op-code which is treated as an address. The address portion of the op-code is placed on the address bus at the start of the execute cycle and this determines which memory location the register in question is loaded from or stored to.
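The decoding described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 8-bit instruction layout (a 4-bit op-code in the high nibble and a 4-bit address field in the low nibble) and the function names `split_instruction` and `decode_op_code` are hypothetical. Only the encodings 11 = A register and 10 = load come from the example in the text.

```python
def split_instruction(instruction):
    """Split an 8-bit instruction into (op_code, address).

    Assumed layout (not from the article): high nibble is the op-code,
    low nibble is the address field.
    """
    op_code = (instruction >> 4) & 0b1111
    address = instruction & 0b1111
    return op_code, address


def decode_op_code(op_code):
    """Turn a 4-bit op-code into control lines, gate by gate."""
    r0 = op_code & 1          # lower two bits: register select
    r1 = (op_code >> 1) & 1
    o0 = (op_code >> 2) & 1   # upper two bits: operation
    o1 = (op_code >> 3) & 1

    # One AND gate per register; exactly one select line is 1.
    select = [
        (1 - r1) & (1 - r0),  # 00
        (1 - r1) & r0,        # 01
        r1 & (1 - r0),        # 10
        r1 & r0,              # 11 = A register
    ]
    # The load (latch) line is high only for operation bits 10.
    load = o1 & (1 - o0)
    return select, load


op_code, address = split_instruction(0b10110010)
select, load = decode_op_code(op_code)
# select == [0, 0, 0, 1] (A register), load == 1, address == 2
```

Adding registers or operations only adds more gates of the same kind, which is the point the text makes about more complex processors.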

Notice that what gets placed on the address bus depends on which register is active. During the fetch cycle it is the PC register that is active: it drives the address bus, and the instruction register latches what is on the data bus. During the execute cycle it is the instruction register that is active: it drives the address bus, and one of the registers latches what is on the data bus.

It really is very simple. All we need to do now is put it all together.

Fetch-Execute Cycle

Now we have a complete picture of what happens during the execute phase and we can even add to the description the necessary delays while everything settles down. Everything happens at times determined by the system or processor clock. Exactly when everything happens varies according to the particular type of processor, but usually the rising and falling edges of the clock pulse are used to mark the moment things happen. For a register load instruction that has just been placed in the instruction register by the previous fetch this would be:
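A minimal sketch of one fetch followed by one execute for a register load, based only on the description above. The class name `TinyCPU`, the 8-bit instruction layout (op-code in the high nibble, address field in the low nibble) and the 16-location memory are assumptions made for illustration. Clock edges and settling delays are not modelled; each method simply performs the bus transfers in order.

```python
class TinyCPU:
    """Toy model of the fetch and execute cycles (load only)."""

    def __init__(self, memory):
        self.memory = list(memory)
        self.pc = 0                    # program counter
        self.ir = 0                    # instruction register
        self.registers = [0, 0, 0, 0]  # index 3 (bits 11) is the A register
        self.address_bus = 0
        self.data_bus = 0

    def fetch(self):
        # The PC drives the address bus; the IR latches the data bus.
        self.address_bus = self.pc
        self.data_bus = self.memory[self.address_bus]
        self.ir = self.data_bus
        self.pc += 1

    def execute(self):
        # The address field of the IR drives the address bus.
        op_code = (self.ir >> 4) & 0b1111
        operation = (op_code >> 2) & 0b11
        register = op_code & 0b11
        self.address_bus = self.ir & 0b1111
        self.data_bus = self.memory[self.address_bus]
        if operation == 0b10:
            # Load: the selected register latches the data bus.
            self.registers[register] = self.data_bus
        # Other operations are left out of this sketch.


# Location 0 holds "load A from address 2"; location 2 holds the data.
cpu = TinyCPU([0b1011_0010, 0, 42])
cpu.fetch()
cpu.execute()
# cpu.registers[3] is now 42 and cpu.pc is 1
```

The only thing that changes between the two phases is which register drives the address bus and which one latches the data bus.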

And, of course, after the execute phase there follows another fetch and so on until the program is complete.

This is the basic principle of the computer and the way that the CPU works. You don’t need any more hardware or additional operating principles to make a machine that does most of the things you need. For example, to add two numeric values you don’t need a special instruction that adds two memory locations together. All you need is an instruction that loads the A register and one that adds the contents of a memory location to the A register’s current contents.

Notice that you don't need another sort of clock cycle in addition to the fetch and execute cycles. The add hardware is implemented so that, instead of loading the register with the contents of the data bus, the value on the data bus is added to the register. The operation of repeatedly adding values to the A register, i.e. “accumulating” a sum, is where the A register gets its name.

The extra hardware needed to make the A register into an accumulator is simply a full adder that adds the value on the data bus to the value on the output of the A register. The output of the full adder is fed back into the input of the A register.
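The accumulate arrangement can be sketched at register level as follows. The class name `Accumulator`, the 8-bit width and the sample memory contents are assumptions for illustration; the text fixes none of them.

```python
class Accumulator:
    """A register whose input can come from an adder fed by its own output."""

    def __init__(self, width=8):
        self.mask = (1 << width) - 1  # assumed register width
        self.value = 0

    def load(self, data_bus):
        # "Load A": latch whatever is on the data bus.
        self.value = data_bus & self.mask

    def add(self, data_bus):
        # "Add to A": the adder combines the register's output with the
        # data bus, and the result is fed back into the register.
        self.value = (self.value + data_bus) & self.mask


memory = [5, 7, 30]          # hypothetical data values
a = Accumulator()
a.load(memory[0])            # A = 5
a.add(memory[1])             # A = 12
a.add(memory[2])             # A = 42
```

Adding two memory locations therefore takes two ordinary instructions, a load and an add, each with its own fetch and execute cycle.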

If you don’t want to make things complicated you can even use the same hardware arrangement to implement a “Load A” operation: perform an “Add to A” but block the output of the A register, so that the adder sees zero on that input and passes the value on the data bus through unchanged. In practice, though, a modern processor will have a number of internal buses connecting the registers to the Arithmetic and Logic Unit or ALU, of which our full adder is just the beginning.

Question: what does a half adder want to be when it grows up?

Answer: a full adder

Question: what does a full adder want to be when it grows up?

Answer: an ALU…

If you don't know what a half adder is see: Binary Arithmetic
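For readers who want to see the half adder, the full adder and the blocked-feedback trick in one place, here is a gate-level sketch. The function names, the ripple-carry arrangement and the 8-bit width are assumptions for illustration, not a description of any particular processor.

```python
def half_adder(a, b):
    """Add two bits; return (sum, carry)."""
    return a ^ b, a & b


def full_adder(a, b, carry_in):
    """Two half adders plus an OR gate; return (sum, carry_out)."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, c1 | c2


def add_to_a(a_value, data_bus, feedback_enabled=True, width=8):
    """Compute the next value of the A register, one full adder per bit.

    With feedback_enabled=False the A register's output is blocked, so
    the adder sees zero and the result is simply the data bus value,
    which is how the same hardware can perform "Load A".
    """
    a_in = a_value if feedback_enabled else 0
    carry = 0
    result = 0
    for bit in range(width):
        s, carry = full_adder((a_in >> bit) & 1, (data_bus >> bit) & 1, carry)
        result |= s << bit
    return result  # the final carry out is discarded in this sketch


next_a = add_to_a(5, 7)                            # add: 12
loaded = add_to_a(5, 7, feedback_enabled=False)    # load: 7
```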


Last Updated ( Thursday, 13 October 2022 )

The instruction decoder provides signals to select the register and determine what it does


The address field selects a memory address to be used in the operation

How to accumulate
